Friday, 27 May 2022

Perspectives on the Future of SP Networking: Intent and Outcome Based Transport Service Automation

Thursday, 26 May 2022

How to Contribute to Open Source and Why

Getting involved in the open-source community (especially early in your career) is a smart move for many reasons. When you help others, you almost always get help in return. You can make connections that can last your entire career, helping you down the road in ways you can’t anticipate.

In this article, we’ll cover more about why you should consider contributing to open source, and how to get started.

Why Should I Get Involved in Open Source?

Designing, building, deploying, and maintaining software is, believe it or not, a social activity. Our tech careers place us in a network of bright and empathetic professionals, and being in that network is part of what brings job satisfaction and career opportunities.

Nowhere in tech is this more apparent than in the world of free and open-source software (FOSS). In FOSS, we build in public, so our contributions are highly visible and done together with like-minded developers who enjoy helping others. And by contributing to the supply of well-maintained open-source software, we make the benefits of technology accessible around the world.

Where Should I Contribute?

If you’re looking to get started, then the first question you’re likely asking is: Where should I contribute? A great starting place is an open-source project that you have used or are interested in.

Most open-source projects store their code in a repository on GitHub or GitLab. This is the place where you can find out what the project’s needs are, who the project maintainers are, and how you can contribute. Because of the collaborative and generous culture of FOSS, maintainers are often receptive to unsolicited offers of help. Often, you can simply reach out to a maintainer and offer to contribute.

For example, are you interested in contributing to Django? The project makes it very clear: “We need your help to make Django as good as it can possibly be.”

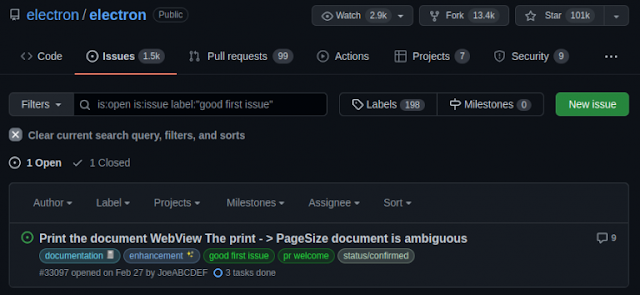

Finding known issues

Finding tasks for new contributors

The Contribution Process

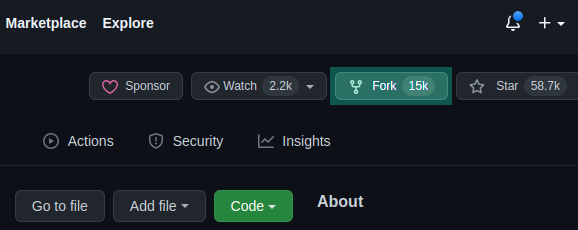

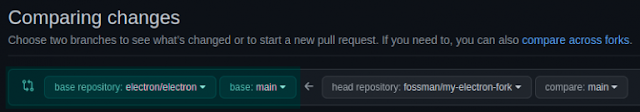

Fork the Project Repository

Solve the Issue

Submit a Pull Request

Wait for Feedback

Tuesday, 24 May 2022

Broadband Planning: Who Should Lead, and How?

As new federal funding is released to help communities bridge the digital divide, you’ll need to gain a strong understanding of the solutions and deployment options available. Often overlooked, however, is the need to develop and commit to a realistic and inclusive broadband planning process, one that acknowledges the broad variety of stakeholders you’ll encounter and offers a realistic timeline to meet funding mandates. You’ll also need a strong leader. But who should lead, and what should the process look like?

Why broadband planning is critical

As a licensed landscape architect and environmental planner, I’ve had the opportunity to work with state and local government leaders on a variety of infrastructure projects. The projects ranged from a few acres to 23,000 acres, and from roadways and utilities to commercial and residential communities, and even campuses and parks. In each case, we created and adhered to a detailed planning process, which made things go more smoothly and resulted in a more successful project.

As critical infrastructure, broadband projects should adopt the same approach. You’ll benefit greatly by leveraging a well-thought-out collaborative planning model. Your stress levels will be reduced, your stakeholders happier, and the outcome more resilient and sustainable. Using a collaborative planning model helps accomplish this by:

◉ Establishing a clear vision and goals

◉ Limiting the scope of the project, preventing “scope creep”

◉ Creating dedicated milestones to keep you on track

◉ Providing transparency for all stakeholders

◉ Setting a realistic timeline to better plan and promote your project.

Using a collaborative broadband planning process also creates a reference source for media outreach and promotion as milestones are reached. Lastly, with a recorded process, funding mandates and data reporting requirements can be met more easily, keeping you and your team in compliance.

Who should lead broadband planning?

My involvement in traditional infrastructure planning has allowed me to experience first-hand how comfortable government personnel are in leading large-scale projects. Why are they? Because:

◉ They’re well versed in local ordinances, regulatory laws, and community standards

◉ They understand their community and its people

◉ They have established relationships that cross the public and private sectors.

That’s why I, and many others in the IT industry, feel these same state and local government leaders can offer the most success leading broadband planning in their communities.

In addition, those in planning-specific positions are especially suited to do so, having unique skill sets that address:

◉ What type of infrastructure is needed and where to locate it

◉ Gathering realistic data via surveys, GIS mapping, and canvassing

◉ Construction issues that may serve as potential roadblocks or opportunities

◉ Understanding potential legal and maintenance issues.

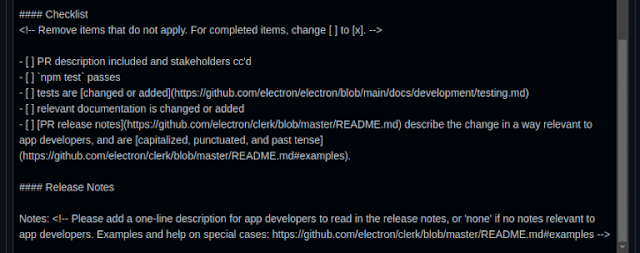

A realistic broadband planning process

To help our partners in the public and private sectors achieve greater success in their broadband efforts, we’ve created a new guide. It outlines a realistic, inclusive broadband planning process, including suggested timelines and milestones.

Funding for broadband

Sunday, 22 May 2022

How Cisco DNA Assurance Proves It’s ‘Not a Network Issue’

When something in your house breaks, it’s your problem. When something in your network breaks, it’s everyone’s problem. At least, that’s how it can feel when the support tickets, angry phone calls, and so on suddenly start rolling in. They quickly remind you that those numbers behind the traffic visualizations are more than numbers alone. They represent individuals. That includes individuals who don’t notice how the infrastructure supports them until suddenly… it’s not.

The adage that “time is money” applies here, and maybe better than anywhere else. Because when users on the network cannot do what they came to do, the value of their halted actions can add up quickly. That means reaction can’t be the first strategy for preserving a network. Instead, proactive measures that prevent problems (ha, alliteration) become first-order priorities.

That’s where Cisco DNA Center and Assurance come in, and along with them, the DNAAS course: Leveraging Cisco Intent-Based Networking DNA Assurance (DNAAS) v2.0.

Let’s Start with Intent

This will come as no surprise to anyone, but networks are built for a purpose. From a top-down perspective, the network provides the infrastructure necessary to support business intent. Cisco DNA Center allows network admins and operators to make sure that the business intent is translated into network design and functionality. This ensures that the network is actually accomplishing what is needed. Cisco DNA Center has a load of tools, configs, and templates to make the network functional.

What is Cisco DNA Assurance?

Cisco DNA Assurance is the tool that keeps the network live. With it, we can use analytics, machine learning, and AI to understand the health of the intent-based network. DNA Assurance can identify problems before they grow into critical issues. It allows us to gauge the health of the network across clients, devices, and applications and to establish a baseline. From there, we can troubleshoot and identify recurring issues against that baseline health of the network, before those issues have a significant impact. We don’t have to wait for an outage to act. (Or react.)

We’re no longer stuck in this red-light or green-light situation, where the network is either working or it’s not. When the light goes from green to yellow, we can start saying, “Hey, why is that happening? Let’s get to the root cause and fix it.”

Obviously, all of this was important before the big shift to hybrid work environments, but it’s even more critical now. When you have a problem, you can’t just walk down the hall to the IT guy. You’re sort of stranded on an island, hoping someone else can figure out what’s wrong. And on the other hand, when you’re the person tasked with fixing those problems, you want to know what’s going on as quickly as possible.

One customer I worked with installed Cisco DNA Assurance to ‘prove the innocence of the network.’ He felt that being able to quickly identify the source of a problem, especially when it was not actually a network issue, helped to get fixes done more quickly and efficiently. DNA Assurance helped to rule out the network, or ‘prove it was innocent,’ and allowed him to narrow his troubleshooting focus.

Another benefit of DNA Assurance is that it’s built on Cisco’s expertise. More than 30 years of experience with troubleshooting networks and devices have gone into developing Assurance. Its technology doesn’t just give you an overview of the network; it lets you know where things are going wrong and helps you discover solutions.

About the DNAAS course

Leveraging Cisco Intent-Based Networking DNA Assurance (DNAAS) v2.0 is the technology training course we developed to teach users about Cisco DNA Assurance. The course is designed to give a clear understanding of what DNA Assurance can do and to build a deep knowledge of the capabilities of the technology. It’s meant to give new users a firm handle on the technology while increasing the expertise of existing users and empowering them to further optimize their implementation of DNA Assurance.

One of the things we wanted to do was highlight some of the areas that users may not have touched on before. We give them a chance to experience those things and potentially roll them into tangible solutions on their own network. It’s all meant to be immediately actionable. Users can take this course and instantly turn back around and do something with the knowledge.

Labs are one of the ways that we’ve focused on bringing more of the experience to users who are taking the course. New users are going to interact with a real DNA Center instance, and experienced users are going to have the chance to see new configurations. We build out the fundamental skills necessary to use DNA Assurance, rather than focusing on strict use cases.

We treated it like learning to drive a car. We could teach you all the specifics about one highly specialized vehicle, or we could give you the foundational skills necessary to drive anything and allow you to work towards your specific needs.

Overall, students are going to expand their practical knowledge of DNA Assurance and gain actionable skills they can immediately use. DNAAS is an excellent entry into the technology for new users and an equally excellent learning opportunity for experienced users. It helps build important skills that help users to get the most out of the technology and keep their networks running smoothly.

Source: cisco.com

Saturday, 21 May 2022

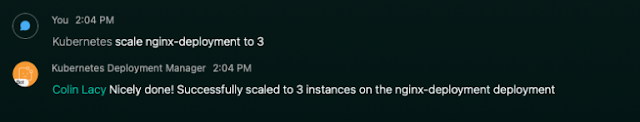

ChatOps: Managing Kubernetes Deployments in Webex

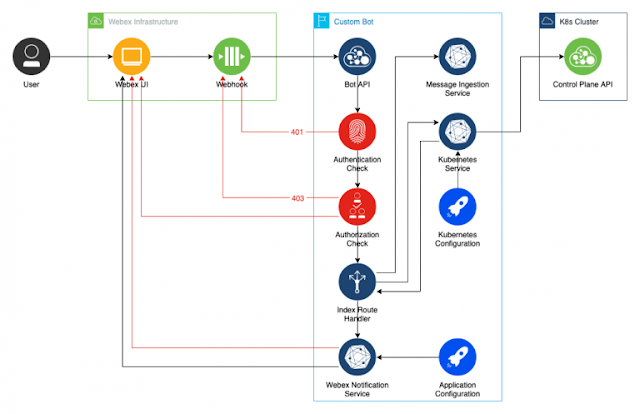

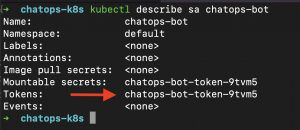

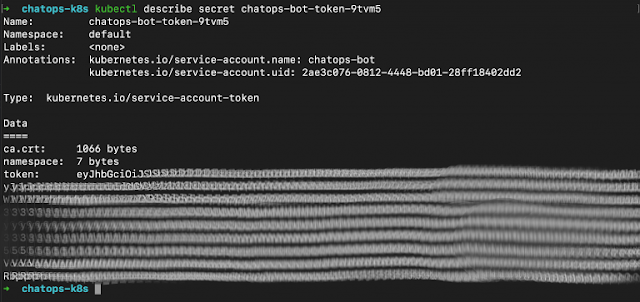

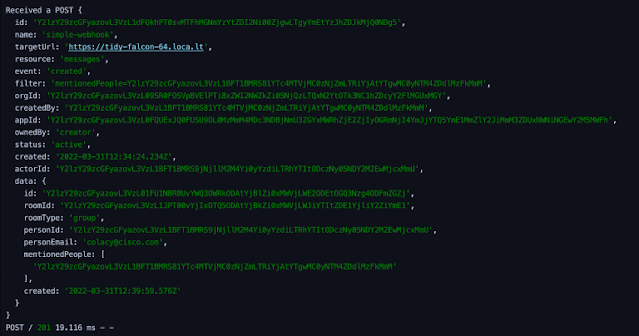

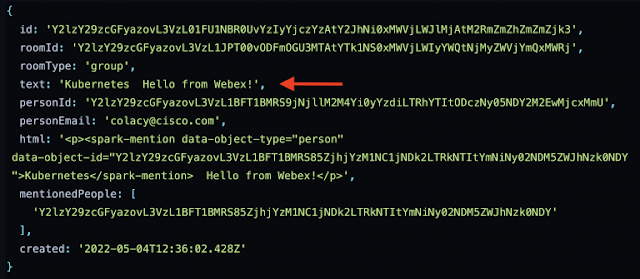

This is the third post in a series about writing ChatOps services on top of the Webex API. In the first post, we built a Webex Bot that received message events from a group room and printed the event JSON out to the console. In the second, we added security to that Bot, adding an encrypted authentication header to Webex events, and subsequently adding a simple list of authorized users to the event handler. We also added user feedback by posting messages back to the room where the event was raised.
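As a recap of the security steps from the second post, the checks can be sketched roughly as follows. This is a minimal Python sketch under stated assumptions, not the code from the series: it assumes the webhook was registered with a shared secret, that Webex sends an HMAC-SHA1 hex digest of the raw request body in the `X-Spark-Signature` header, and that the sender’s address arrives in the event’s `data.personEmail` field. The secret value, the `AUTHORIZED_USERS` set, and the function names are hypothetical.

```python
import hashlib
import hmac
import json

# Hypothetical values for illustration only.
WEBHOOK_SECRET = b"replace-with-your-webhook-secret"
AUTHORIZED_USERS = {"alice@example.com"}


def signature_is_valid(raw_body: bytes, signature_header: str,
                       secret: bytes = WEBHOOK_SECRET) -> bool:
    """Recompute the HMAC-SHA1 digest of the body and compare it to the header."""
    expected = hmac.new(secret, raw_body, hashlib.sha1).hexdigest()
    # compare_digest avoids leaking timing information during the comparison.
    return hmac.compare_digest(expected.encode(), signature_header.encode())


def sender_is_authorized(event: dict, allowed=AUTHORIZED_USERS) -> bool:
    """Check the sender's email against a simple allow list."""
    email = event.get("data", {}).get("personEmail", "")
    return email.lower() in allowed


def accept_event(raw_body: bytes, signature_header: str):
    """Return the parsed event if it passes both checks, otherwise None."""
    if not signature_is_valid(raw_body, signature_header):
        return None
    event = json.loads(raw_body)
    return event if sender_is_authorized(event) else None
```

The signature is checked before the body is parsed, so an unsigned or tampered request is rejected without its contents being trusted.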

In this post, we’ll build on what was done in the first two posts, and start to apply real-world use cases to our Bot. The goal here will be to manage Deployments in a Kubernetes cluster using commands entered into a Webex room. Not only is this a fun challenge to solve, but it also provides wider visibility into the goings-on of an ops team, as they can scale a Deployment or push out a new container version in the public view of a Webex room. You can find the completed code for this post on GitHub.
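To make the idea of room commands concrete, here is a minimal Python sketch of turning message text into a structured command. The command grammar (`scale <deployment> <replicas>` and `deploy <deployment> <image>`), the `Command` type, and `parse_command` are all hypothetical and are not taken from the completed code; the sketch also assumes the Bot mention has already been stripped from the text, and it stops short of calling the cluster.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Command:
    action: str      # "scale" or "deploy"
    deployment: str  # name of the target Deployment
    argument: str    # replica count for "scale", image reference for "deploy"


def parse_command(text: str) -> Optional[Command]:
    """Parse a three-word command; return None for anything unrecognized."""
    parts = text.split()
    if len(parts) != 3:
        return None
    action, deployment, argument = parts
    action = action.lower()
    if action == "scale":
        # Replica counts must be non-negative whole numbers.
        if not argument.isdecimal():
            return None
        return Command("scale", deployment, argument)
    if action == "deploy":
        return Command("deploy", deployment, argument)
    return None
```

Returning `None` for unrecognized input lets the event handler reply with a usage hint rather than acting on a malformed request.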

This post assumes that you’ve completed the steps listed in the first two blog posts. You can find the code from the second post here. Also, very important, be sure to read the first post to learn how to make your local development environment publicly accessible so that Webex Webhook events can reach your API. Make sure your tunnel is up and running and Webhook events can flow through to your API successfully before proceeding on to the next section. In this case, I’ve set up a new Bot called Kubernetes Deployment Manager, but you can use your existing Bot if you like. From here on out, this post assumes that you’ve taken those steps and have a successful end-to-end data flow.

Architecture

Let’s take a look at what we’re going to build: