In parallel, the Internet of Vehicles (IoV) and Vehicle-to-Everything (V2X) continue to evolve. The IoV encompasses a range of technologies and applications, including connected cars with internet access, Vehicle-to-Vehicle (V2V) and Vehicle-to-Cloud (V2C) communication, autonomous vehicles, and advanced traffic management systems. The timeline for widespread adoption of these technologies is presently unclear and will depend on a variety of factors; however, adoption will likely be a gradual process.

Over time, the number of Electronic Control Units (ECUs) in vehicles has seen a significant increase, transforming modern vehicles into complex networks on wheels. This surge is attributed to advancements in vehicle technology and the increasing demand for innovative features. Today, luxury passenger vehicles may contain 100 or more ECUs. Another growing trend is the virtualization of ECUs, where a single physical ECU can run multiple virtual ECUs, each with its own operating system. This development is driven by the need for cost efficiency, consolidation, and the desire to isolate systems for safety and security purposes. For instance, a single ECU could host both an infotainment system running QNX and a telematics unit running on Linux.

ECUs run a variety of operating systems depending on the complexity of the tasks they perform. For tasks requiring real-time processing, such as engine control or ABS (anti-lock braking system) control, Real-Time Operating Systems (RTOS) built on AUTOSAR (Automotive Open System Architecture) standards are popular. These systems can handle strict timing constraints and guarantee the execution of tasks within a specific time frame. On the other hand, for infotainment systems and more complex systems requiring advanced user interfaces and connectivity, Linux-based operating systems like Automotive Grade Linux (AGL) or Android Automotive are common due to their flexibility, rich feature sets, and robust developer ecosystems. QNX, a commercial Unix-like real-time operating system, is also widely used in the automotive industry, notably for digital instrument clusters and infotainment systems due to its stability, reliability, and strong support for graphical interfaces.

The unique context of ECUs presents several distinct challenges regarding API security. Unlike traditional IT systems, many ECUs have to function in a highly constrained environment with limited computational resources and power, and often have to adhere to strict real-time requirements. This can make it difficult to implement robust security mechanisms, such as strong encryption or complex authentication protocols, which are computationally intensive. Furthermore, ECUs need to communicate securely with each other and with external devices or services. This often leads to compromises in vehicle network architecture, where a high-complexity ECU acts as an Internet gateway that provides desirable properties such as communications security. In-vehicle components situated behind the gateway, on the other hand, may communicate using methods that lack privacy, authentication, or integrity.

ECUs, or Electronic Control Units, are embedded systems in automotive electronics that control one or more of the electrical systems or subsystems in a vehicle. These can include systems related to engine control, transmission control, braking, power steering, and others. ECUs are responsible for receiving data from various sensors, processing this data, and triggering the appropriate response, such as adjusting engine timing or deploying airbags.

DCUs, or Domain Control Units, are a relatively new development in automotive electronics, driven by the increasing complexity and interconnectivity of modern vehicles. A DCU controls a domain, which is a group of functions in a vehicle, such as the drivetrain, body electronics, or infotainment system. A DCU integrates several functions that were previously managed by individual ECUs. A DCU collects, processes, and disseminates data within its domain, serving as a central hub.

The shift towards DCUs can reduce the number of separate ECUs required, simplifying vehicle architecture and improving efficiency. However, it also necessitates more powerful and sophisticated hardware and software, as the DCU needs to manage multiple complex functions concurrently. This centralization can also increase the potential impact of any failures or security breaches, underscoring the importance of robust design, testing, and security measures.

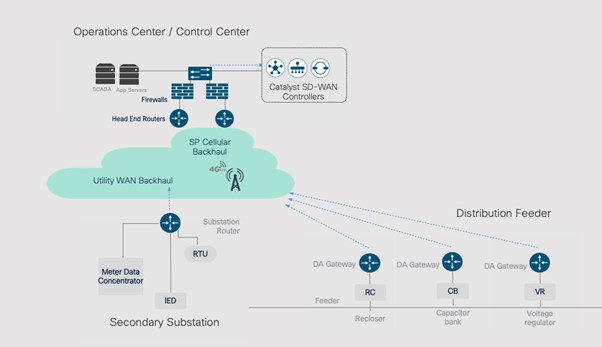

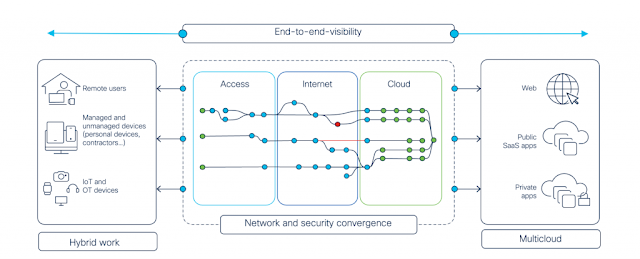

Direct internet connectivity is usually restricted to only one or two Electronic Control Units (ECUs). These ECUs are typically part of the infotainment system or the telematics control unit, which require internet access to function. The internet connection is shared among these systems and possibly extended to other ECUs. The remaining ECUs typically communicate via an internal network like the CAN (Controller Area Network) bus or Automotive Ethernet, without requiring direct internet access.

The increasing complexity of vehicle systems, the growing number of ECUs, and pressure to bring cutting-edge consumer features to market have led to an explosion in the number of APIs that need to be secured. This complexity is compounded by the long lifecycle of vehicles, which requires security to be maintained and updated over a decade or more, often without the regular connectivity that traditional IT systems enjoy. Finally, the critical safety implications of many ECU functions mean that API security issues can have direct and severe consequences for vehicle operation and passenger safety.

ECUs interact with cloud-hosted APIs to enable a variety of functionalities, such as real-time traffic updates, streaming entertainment, finding suitable charging stations and service centers, over-the-air software updates, remote diagnostics, telematics, usage-based insurance, and infotainment services.

Open Season

In the fall of 2022, security researchers discovered and disclosed vulnerabilities affecting the APIs of a number of leading car manufacturers. The researchers were able to remotely access and control vehicle functions, including locking and unlocking, starting and stopping the engine, honking the horn and flashing the headlights. They were also able to locate vehicles using just the vehicle identification number or an email address. Other vulnerabilities included being able to access internal applications and execute code, as well as perform account takeovers and access sensitive customer data.

The research is historically significant because security researchers would traditionally avoid targeting production infrastructure without authorization (e.g., as part of a bug bounty program). Most researchers would also hesitate to make sweeping disclosures that do not pull punches, albeit responsibly. It seems the researchers were emboldened by the U.S. Department of Justice’s recent revision of its charging policy for the Computer Fraud and Abuse Act (CFAA), and this activity may represent a new era of Open Season Bug Hunting.

The revised policy, announced in May of 2022, directs that good-faith security research should not be prosecuted under the CFAA. The announcement states that “Computer security research is a key driver of improved cybersecurity,” and that “The department has never been interested in prosecuting good-faith computer security research as a crime, and today’s announcement promotes cybersecurity by providing clarity for good-faith security researchers who root out vulnerabilities for the common good.”

These vulnerability classes would not surprise your typical cybersecurity professional; they are fairly pedestrian. Anyone familiar with the OWASP API Security Project will recognize the core issues at play. What may be surprising is how prevalent they are across different automotive organizations. It can be tempting to chalk this up to a lack of awareness or poor development practices, but the root causes are likely much more nuanced and not at all obvious.

Root Causes

Despite the considerable experience and skills possessed by Automotive OEMs, basic API security mistakes can still occur. This might seem counterintuitive given the advanced technical aptitude of their developers and their awareness of the associated risks. However, it’s essential to understand that, in complex and rapidly evolving technological environments, errors can easily creep in. In the whirlwind of innovation, deadlines, and productivity pressures, even seasoned developers might overlook some aspects of API security. Such issues can be compounded by factors like communication gaps, unclear responsibilities, or simply human error.

Development at scale can significantly amplify the risks associated with API security. As organizations grow, different teams and individuals often work concurrently on various aspects of an application, which can lead to a lack of uniformity in implementing security standards. Miscommunication or confusion about roles and responsibilities can result in security loopholes. For instance, one team may assume that another is responsible for implementing authentication or input validation, leading to vulnerabilities. Additionally, the context of service exposure, whether on the public internet or within a Virtual Private Cloud (VPC), necessitates different security controls and considerations. Yet, these nuances can be overlooked in large-scale operations. Moreover, the modern shift towards microservices architecture can also introduce API security issues. While microservices provide flexibility and scalability, they also increase the number of inter-service communication points. If these communication points, or APIs, are not adequately secured, the system’s trust boundaries can be breached, leading to unauthorized access or data breaches.

Automotive supply chains are incredibly complex. This is a result of the intricate network of suppliers involved in providing components and supporting infrastructure to OEMs. OEMs typically rely on tier-1 suppliers, who directly provide major components or systems, such as engines, transmissions, or electronics. However, tier-1 suppliers themselves depend on tier-2 suppliers for a wide range of smaller components and subsystems. This multi-tiered structure is necessary to meet the diverse requirements of modern vehicles. Each tier-1 supplier may have numerous tier-2 suppliers, leading to a vast and interconnected web of suppliers. This complexity can make it difficult to manage the cybersecurity requirements of APIs.

While leading vehicle cybersecurity standards like ISO/SAE 21434, UN ECE R155 and R156 cover a wide range of aspects related to securing vehicles, they do not specifically provide comprehensive guidance on securing vehicle APIs. These standards primarily focus on broader cybersecurity principles, risk management, secure development practices, and vehicle-level security measures. The absence of specific guidance on securing vehicle APIs can potentially lead to the introduction of vulnerabilities in vehicle APIs, as the focus may primarily be on broader vehicle security aspects rather than the specific challenges associated with API integration and communication.

Things to Avoid

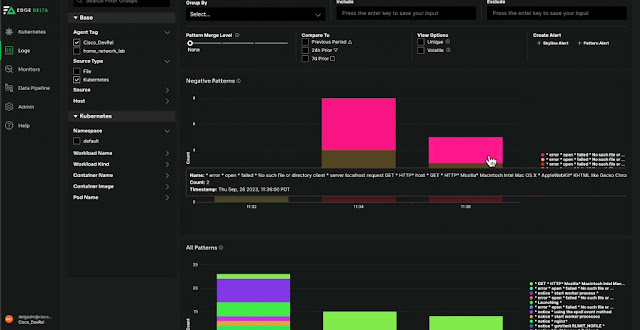

Darren Shelcusky of Ford Motor Company explains that while many approaches to API security exist, not all prove to be effective within the context of a large multinational manufacturing company. For instance, playing cybersecurity “whack-a-mole,” where individual security threats are addressed as they pop up, is far from optimal. It can lead to an inconsistent security posture and might miss systemic issues. Similarly, the “monitor everything” strategy can drown the security team in data, leading to signal-to-noise issues and an overwhelming number of false positives, making it challenging to identify genuine threats. Relying on policies and standards for API security, while important, is not sufficient unless these guidelines are seamlessly integrated into the development pipelines and workflows, ensuring their consistent application.

A strictly top-down approach, with stringent rules and fear of reprisal for non-compliance, may indeed ensure adherence to security protocols. However, it could also alienate employees, stifle creativity, and forfeit valuable lessons learned from the ground up. Additionally, over-reliance on governance for API security can prove to be inflexible and often incompatible with agile development methodologies, hindering rapid adaptation to evolving threats. Thus, an effective API security strategy requires a balanced, comprehensive, and integrated approach, combining the strengths of various methods and adapting them to the organization’s specific needs and context.

Winning Strategies

Cloud Gateways

Today, Cloud API Gateways play a vital role in securing APIs, acting as a protective barrier and control point for API-based communication. These gateways manage and control traffic between applications and their back-end services, performing functions such as request routing, composition, and protocol translation. From a security perspective, API Gateways often handle important tasks such as authentication and authorization, ensuring that only legitimate users or services can access the APIs. They can support standards such as OAuth 2.0, OpenID Connect, and JSON Web Tokens (JWT). They can enforce rate limiting and throttling policies to protect against Denial-of-Service (DoS) attacks or excessive usage. API Gateways also typically provide basic communications security, ensuring the confidentiality and integrity of API calls. They can help detect and block malicious requests, such as SQL injection or Cross-Site Scripting (XSS) attacks. By centralizing these security mechanisms in the gateway, organizations can ensure a consistent security posture across all their APIs.
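The rate limiting and throttling policies mentioned above are commonly built on a token bucket. The following is a minimal sketch of that idea, not the implementation of any particular gateway product: the names `TokenBucket` and `GatewayRateLimiter`, the per-client keying, and the injectable `now` timestamp are all assumptions made for illustration.

```python
import time


class TokenBucket:
    """Token bucket: refills `rate` tokens per second, holds at most `capacity`."""

    def __init__(self, rate, capacity, now):
        self.rate = rate
        self.capacity = capacity
        self.tokens = capacity      # start full so short bursts are allowed
        self.updated = now

    def allow(self, now):
        # Refill in proportion to the time elapsed since the last request.
        elapsed = max(0.0, now - self.updated)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0      # each admitted request spends one token
            return True
        return False                # caller would typically answer HTTP 429


class GatewayRateLimiter:
    """Hypothetical gateway hook keeping one bucket per client identifier."""

    def __init__(self, rate, capacity):
        self.rate = rate
        self.capacity = capacity
        self._buckets = {}

    def allow(self, client_id, now=None):
        # `now` can be injected for testing; otherwise use a monotonic clock.
        now = time.monotonic() if now is None else now
        bucket = self._buckets.get(client_id)
        if bucket is None:
            bucket = TokenBucket(self.rate, self.capacity, now)
            self._buckets[client_id] = bucket
        return bucket.allow(now)
```

In this sketch each client (for example, a vehicle or an API key) gets an independent budget, so one noisy client cannot exhaust the allowance of another. A managed gateway would normally expose the same behavior through configuration rather than code.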

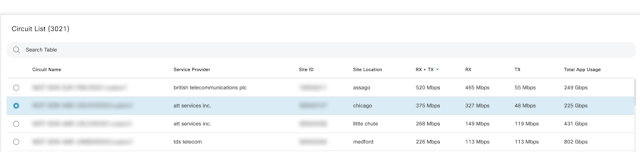

Cloud API gateways also assist organizations with API management, inventory, and documentation. These gateways provide a centralized platform for managing and securing APIs, allowing organizations to enforce authentication, authorization, rate limiting, and other security measures. They offer features for tracking and maintaining an inventory of all hosted APIs, providing a comprehensive view of the API landscape and facilitating better control over security measures, monitoring, and updates. Additionally, cloud API gateways often include built-in tools for generating and hosting API documentation, ensuring that developers and consumers have access to up-to-date and comprehensive information about API functionality, inputs, outputs, and security requirements. Some notable examples of cloud API gateways include Amazon API Gateway, Google Cloud Endpoints, and Azure API Management.

Authentication

In best-case scenarios, vehicles and cloud services mutually authenticate each other using robust methods that include some combination of digital certificates, token-based authentication, or challenge-response mechanisms. In the worst case, they don’t perform any authentication at all. Unfortunately, in many cases, vehicle APIs rely on weak authentication mechanisms, such as a serial number being used to identify the vehicle.

Certificates

In certificate-based authentication, the vehicle presents a unique digital certificate issued by a trusted Certificate Authority (CA) to verify its identity to the cloud service. While certificate-based authentication provides robust security, it does come with a few drawbacks. First, certificate management can be complex and cumbersome, especially in large-scale environments like fleets of vehicles, as it involves issuing, renewing, and revoking certificates, often for thousands of devices. Second, setting up a secure and trusted CA to issue and validate certificates requires significant effort and expertise, and any compromise of the CA can have serious security implications.

Tokens

In token-based authentication, the vehicle includes a token (such as a JWT or OAuth token) in its requests once its identity has been confirmed by the cloud service. Token-based authentication, while beneficial in many ways, also comes with certain disadvantages. First, tokens, if not properly secured, can be intercepted during transmission or stolen from insecure storage, leading to unauthorized access. Second, tokens often have a set expiration time for security purposes, which means systems need to handle token refreshes, adding extra complexity. Lastly, token validation can require a connection to the authentication server (for example, to introspect opaque tokens or to check revocation), which could impact system performance or lead to access issues if the server is unavailable or experiencing high traffic.
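To make the token lifecycle concrete, here is a minimal sketch of issuing and verifying an HS256-signed JWT with an expiration claim, using only the Python standard library. The function names `issue_token` and `verify_token`, the shared secret, and the use of the `sub` claim for a vehicle identifier are assumptions for illustration; a production system would more likely use a vetted JWT library and asymmetric signatures.

```python
import base64
import hashlib
import hmac
import json
import time


def _b64url(data):
    # JWTs use URL-safe base64 without padding.
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def _b64url_decode(text):
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def _sign(secret, signing_input):
    return hmac.new(secret, signing_input.encode("ascii"), hashlib.sha256).digest()


def issue_token(secret, vehicle_id, ttl_seconds, now=None):
    """Issue a short-lived token after the vehicle's identity has been confirmed."""
    now = int(time.time() if now is None else now)
    header = {"alg": "HS256", "typ": "JWT"}
    payload = {"sub": vehicle_id, "iat": now, "exp": now + ttl_seconds}
    signing_input = ".".join(
        _b64url(json.dumps(part, separators=(",", ":")).encode("utf-8"))
        for part in (header, payload)
    )
    return signing_input + "." + _b64url(_sign(secret, signing_input))


def verify_token(secret, token, now=None):
    """Return the claims if the signature and expiry check out, else raise ValueError."""
    now = time.time() if now is None else now
    parts = token.split(".")
    if len(parts) != 3:
        raise ValueError("malformed token")
    header_b64, payload_b64, signature_b64 = parts
    expected = _sign(secret, header_b64 + "." + payload_b64)
    # Constant-time comparison avoids leaking signature bytes through timing.
    if not hmac.compare_digest(expected, _b64url_decode(signature_b64)):
        raise ValueError("bad signature")
    if json.loads(_b64url_decode(header_b64)).get("alg") != "HS256":
        raise ValueError("unexpected algorithm")
    claims = json.loads(_b64url_decode(payload_b64))
    if now >= claims["exp"]:
        raise ValueError("token expired")
    return claims
```

Note that this sketch verifies the signature and expiry locally, without contacting the issuer. Revocation before expiry is what typically reintroduces a server round trip, which is one reason short lifetimes plus a refresh flow are a common design choice.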

mTLS

For further security, these methods can be used in conjunction with Mutual TLS (mTLS) where both the client (vehicle) and server (cloud) authenticate each other. These authentication mechanisms ensure secure, identity-verified communication between the vehicle and the cloud, a crucial aspect of modern connected vehicle technology.
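As a rough illustration of what mTLS means in configuration terms, the sketch below builds the two TLS contexts with Python's standard `ssl` module. The function names and file-path parameters are hypothetical, and the paths are optional here only so the sketch can be exercised without real key material; an actual deployment would always load a certificate chain and a pinned CA bundle on both sides.

```python
import ssl


def cloud_server_context(cert_file=None, key_file=None, vehicle_ca_file=None):
    """Server side: present the cloud certificate and REQUIRE a client certificate."""
    ctx = ssl.create_default_context(ssl.Purpose.CLIENT_AUTH)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    # Without this line the server would accept anonymous clients (one-way TLS).
    ctx.verify_mode = ssl.CERT_REQUIRED
    if cert_file:
        ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)
    if vehicle_ca_file:
        # Trust only the CA that issues vehicle certificates (assumed private CA).
        ctx.load_verify_locations(cafile=vehicle_ca_file)
    return ctx


def vehicle_client_context(cert_file=None, key_file=None, cloud_ca_file=None):
    """Client side: verify the cloud's certificate and present the vehicle's own."""
    # SERVER_AUTH contexts verify the peer certificate and hostname by default.
    ctx = ssl.create_default_context(ssl.Purpose.SERVER_AUTH, cafile=cloud_ca_file)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    if cert_file:
        ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)
    return ctx
```

The only difference from ordinary TLS is on the server: setting `verify_mode` to `ssl.CERT_REQUIRED` makes the handshake fail unless the vehicle presents a certificate that chains to a trusted CA.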

Challenge / Response

Challenge-response authentication mechanisms are best implemented with the aid of a Hardware Security Module (HSM). This approach provides notable advantages including heightened security: the HSM provides a secure, tamper-resistant environment for storing the vehicle’s private keys, drastically reducing the risk of key exposure. In addition, the HSM can perform cryptographic operations internally, adding another layer of security by ensuring sensitive data is never exposed. Sadly, there are also potential downsides to this approach. HSMs can increase complexity throughout the vehicle lifecycle. Furthermore, HSMs also have to be properly managed and updated, requiring additional resources. Lastly, in a scenario where the HSM malfunctions or fails, the vehicle may be unable to authenticate, potentially leading to loss of access to essential services.
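The challenge-response exchange can be sketched as follows. This is a simplified model under stated assumptions: `SimulatedHsm` and `CloudVerifier` are hypothetical names, the HSM is imitated by a plain Python object, and an HMAC over a shared secret stands in for the private-key signature a real HSM would produce (the standard library has no asymmetric signing).

```python
import hashlib
import hmac
import secrets


class SimulatedHsm:
    """Stand-in for an HSM: the key stays inside; only `respond` is exposed."""

    def __init__(self, key):
        self._key = key  # a real HSM would never let this value leave the device

    def respond(self, challenge):
        # The cryptographic operation happens "inside" the module.
        return hmac.new(self._key, challenge, hashlib.sha256).digest()


class CloudVerifier:
    """Cloud side: issues single-use random challenges and checks responses."""

    def __init__(self, enrolled_keys):
        self._keys = enrolled_keys   # vehicle_id -> key (assumed enrolled at manufacture)
        self._pending = {}           # vehicle_id -> outstanding challenge

    def new_challenge(self, vehicle_id):
        challenge = secrets.token_bytes(32)  # unpredictable nonce
        self._pending[vehicle_id] = challenge
        return challenge

    def verify(self, vehicle_id, response):
        # pop() makes each challenge single-use, which defeats simple replays.
        challenge = self._pending.pop(vehicle_id, None)
        key = self._keys.get(vehicle_id)
        if challenge is None or key is None:
            return False
        expected = hmac.new(key, challenge, hashlib.sha256).digest()
        return hmac.compare_digest(expected, response)
```

Because the challenge is random and consumed on first use, a captured response is of no value to an attacker for a later session, and the secret itself never crosses the network.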

Hybrid Approaches

Hybrid approaches to authentication can also be effective in securing vehicle-to-cloud communications. For instance, a server could verify the authenticity of the client’s JSON Web Token (JWT), ensuring the identity and claims of the client. Simultaneously, the client can verify the server’s TLS certificate, providing assurance that it’s communicating with the genuine server and not a malicious entity. This multi-layered approach strengthens the security of the communication channel.

Another example hybrid approach could leverage an HSM-based challenge-response mechanism combined with JWTs. Initially, the vehicle uses its HSM to securely generate a response to a challenge from the cloud server, providing a high level of assurance for the initial authentication process. Once the vehicle is authenticated, the server issues a JWT, which the vehicle can use for subsequent authentication requests. This token-based approach is lightweight and scalable, making it efficient for ongoing communications. The combination of the high-security HSM challenge-response with the efficient JWT mechanism provides both strong security and operational efficiency.

JWTs (JSON Web Tokens) are highly convenient when considering ECUs coming off the manufacturing production line. They provide a scalable and efficient method of assigning unique, verifiable identities to each ECU. Given that JWTs are lightweight and easily generated, they are particularly suitable for mass production environments. Furthermore, JWTs can be issued with specific expiration times, allowing for better management and control of ECU access to various services during initial testing, shipping, or post-manufacturing stages. This means ECUs can be configured with secure access controls right from the moment they leave the production line, streamlining the process of integrating these units into vehicles while maintaining high security standards.

Source: cisco.com