AI Spoofing Detection Architecture and Deployment

Our previous blog post, Designing and Deploying Cisco AI Spoofing Detection, Part 1: From Device to Behavioral Model, introduced a hybrid cloud/on-premises service that detects spoofing attacks using behavioral traffic models of endpoints. In that post, we discussed the motivation and the need for this service and the scope of its operation. We then provided an overview of our Machine Learning development and maintenance process. This post will detail the global architecture of Cisco AISD, the mode of operation, and how IT incorporates the results into its security workflow.

Because Cisco AISD is a security product, minimizing detection delay is critical, and several infrastructure choices follow from that goal. Most Cisco AI Analytics services use Spark as their processing engine. In Cisco AISD, however, we use an AWS Lambda function instead, because a Lambda function typically warms up faster, which produces results sooner and therefore shortens the detection delay. This design choice reduces the computational capacity available to the process, but that has not been a problem thanks to a custom caching strategy that limits processing to only the new data on each Lambda execution.
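To make the idea of processing only new data concrete, here is a minimal sketch of one way such a cache could work: a per-customer watermark that remembers the newest record already handled. This is an illustration under stated assumptions, not the actual AISD caching strategy; the `IncrementalCache` class, the `select_new` method, and the `ts` record field are all hypothetical names.

```python
from dataclasses import dataclass, field


@dataclass
class IncrementalCache:
    """Hypothetical cache: per customer, the newest timestamp already processed."""
    watermarks: dict = field(default_factory=dict)

    def select_new(self, customer_id, records):
        """Return only records newer than the stored watermark, then advance it."""
        last_seen = self.watermarks.get(customer_id, float("-inf"))
        fresh = [r for r in records if r["ts"] > last_seen]
        if fresh:
            # Advance the watermark so the next execution skips these records.
            self.watermarks[customer_id] = max(r["ts"] for r in fresh)
        return fresh
```

In a real serverless function the watermark would have to live in external storage, since in-memory state is not guaranteed to survive between invocations; the sketch keeps it in a dictionary only for brevity.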

Global AI Spoofing Detection Architecture Overview

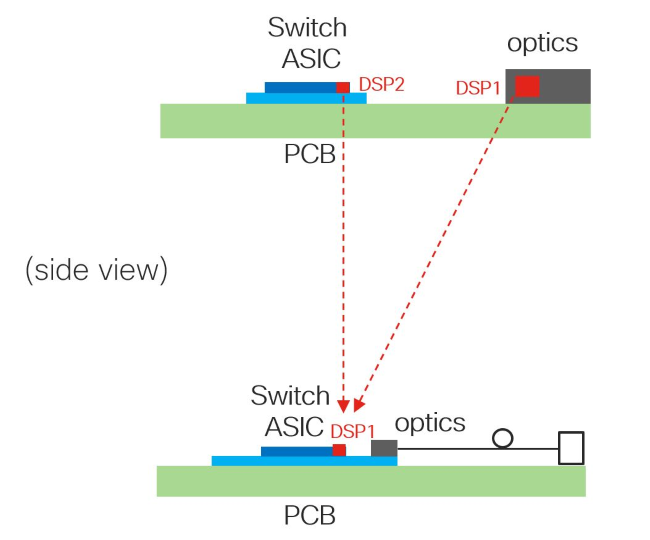

Cisco AISD is deployed on a Cisco DNA Center network controller using a hybrid architecture of an on-premises controller tethered to a cloud service. The service consists of on-premises processes as well as cloud-based components.

The on-premises components on the Cisco DNA Center controller perform several vital functions. On the outbound data path, the service continually receives and processes raw data captured from network devices, anonymizes customer PII, and exports it to cloud processes over a secure channel. On the inbound data path, it receives any new endpoint spoofing alerts generated by the Machine Learning algorithms in the cloud, deanonymizes any relevant customer PII, and triggers any Changes of Authorization (CoA) via Cisco Identity Services Engine (ISE) on affected endpoints.
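The anonymize-on-export, deanonymize-on-import round trip can be sketched as follows. This is only an assumed design for illustration, not a description of how Cisco DNA Center handles PII: it replaces each value with a keyed HMAC-SHA256 token and keeps the reverse mapping in memory on the on-premises side. The `Pseudonymizer` class and its method names are hypothetical.

```python
import hashlib
import hmac


class Pseudonymizer:
    """Hypothetical on-premises helper that swaps PII for opaque tokens."""

    def __init__(self, secret_key: bytes):
        self._key = secret_key
        self._reverse = {}  # token -> original value; never leaves the premises

    def anonymize(self, value: str) -> str:
        """Outbound path: derive a stable token and remember how to reverse it."""
        token = hmac.new(self._key, value.encode("utf-8"), hashlib.sha256).hexdigest()
        self._reverse[token] = value
        return token

    def deanonymize(self, token: str):
        """Inbound path: map a token in a cloud alert back to the original value."""
        return self._reverse.get(token)
```

Because the token is deterministic for a given key, the cloud side can still group records by endpoint without ever seeing the underlying identifier.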

The cloud components perform several key functions, focused primarily on processing the high-volume data flowing from all on-premises deployments and running Machine Learning inference. In particular, the evaluation and detection mechanism has three steps:

1. Apache Airflow is the underlying orchestrator and scheduler that initiates the compute functions. An Airflow DAG frequently enqueues a computation request for each active customer to a queuing service.

2. As each computation request is dequeued, a corresponding serverless compute function is invoked. Using serverless functions lets us control compute costs at scale. The function is highly efficient, short-running, and compute-intensive, and it works in multiple steps: it first performs an ETL step that reads raw anonymized customer data from data buckets and transforms it into a set of input feature vectors, which our Machine Learning models then use for spoof-detection inference. The function is built on the common Function as a Service (FaaS) offerings of cloud providers.

3. The same function then performs the model inference step on the feature vectors produced in the previous step, which detects any spoofing attempts that are present. If a spoofing attempt is detected, the details of the finding are pushed to a database that is queried by the on-premises components of Cisco DNA Center, and the finding is finally presented to administrators for action.
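The three steps above can be sketched end to end in plain Python. This is a toy illustration under stated assumptions, not the production pipeline: a `deque` stands in for the queuing service, a list stands in for the alerts database, and the feature vector (record count and mean bytes per endpoint) and the scoring function are placeholders for the real features and Machine Learning models. All function and field names are hypothetical.

```python
from collections import deque


def enqueue_requests(active_customers, queue):
    """Step 1: what a scheduled DAG task would do on each run."""
    for customer_id in active_customers:
        queue.append({"customer_id": customer_id})


def extract_features(raw_records):
    """Step 2 (ETL): group raw records by endpoint, build one vector each."""
    per_endpoint = {}
    for rec in raw_records:
        per_endpoint.setdefault(rec["endpoint"], []).append(rec["bytes"])
    # Toy feature vector: (number of records, mean bytes per record).
    return {ep: (len(v), sum(v) / len(v)) for ep, v in per_endpoint.items()}


def detect_spoofing(features, score_fn, threshold):
    """Step 3: score each vector; scores above the threshold become findings."""
    findings = []
    for endpoint, vector in features.items():
        score = score_fn(vector)
        if score > threshold:
            findings.append({"endpoint": endpoint, "score": score})
    return findings


def drain(queue, load_raw, score_fn, threshold, alerts_db):
    """Dequeue each request and run ETL plus inference in one invocation."""
    while queue:
        customer_id = queue.popleft()["customer_id"]
        features = extract_features(load_raw(customer_id))
        for finding in detect_spoofing(features, score_fn, threshold):
            alerts_db.append({"customer_id": customer_id, **finding})
```

Keeping ETL and inference inside the same invocation mirrors the description above, where one function handles both steps for a dequeued request.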