When will CML 2 support clustering?

This was the question we heard most when we released Cisco Modeling Labs (CML) 2.0, and it was a great one. So, we listened. CML 2.4 now offers a clustering feature for CML-Enterprise and CML-Higher Education licenses, which lets a CML 2 deployment scale horizontally.

But what does that mean? And what exactly is clustering? Read on to learn about the benefits of Cisco Modeling Labs’ new clustering feature in CML 2.4, how clustering works, and what we have planned for the future.

CML clustering benefits

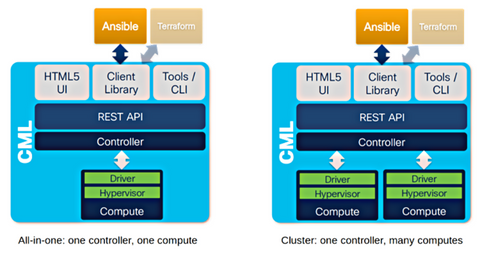

When CML is deployed in a cluster, a lab is no longer restricted to the resources of a single computer (the all-in-one controller). Instead, the lab can use resources from multiple servers combined into a single, large bundle of Cisco Modeling Labs infrastructure.

In CML 2.4, CML-Enterprise and CML-Higher Education customers who have migrated to a CML cluster deployment can use clustering to run larger labs with more (or larger) nodes. It also means a single CML instance can support more users and all their labs. And even though multiple computers and their resources are combined into a single CML instance, users still have the same seamless experience as before, because the User Interface (UI) remains the same. There is no need to select what should run where. The CML controller handles it all behind the scenes, transparently!

How clustering works in CML 2.4 (and beyond)

A CML cluster consists of two types of computers:

◉ One controller: The server that hosts the controller code, the UI, the API, and the reference platform images

◉ One or more computes: Servers that run the node Virtual Machines (VMs), that is, the routers, switches, and other nodes that make up a lab. The controller manages these machines, so users do not interact with them directly.

A separate Layer 2 network segment connects the controller and the computes. We chose a separate network for security (isolation) and performance reasons. No IP addressing or other services are required on this cluster network. The machines participating in the cluster handle everything automatically and transparently.

This intracluster network serves many purposes, most notably:

◉ serving all reference platform images, node definitions, and other files from the controller via NFS sharing to all computes of a cluster.

◉ transporting the traffic of a simulated network that spans multiple computes, either between the computes or (in the case of external connector traffic) to and from the controller.

◉ carrying low-level API calls from the controller to the computes, for example, to start and stop VMs and to operate each individual compute.
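To make the architecture described above concrete, here is a minimal Python sketch of the two server roles and the three uses of the cluster network. It is only an illustration: the class names (`Role`, `ClusterTraffic`, `Server`, `Cluster`) and the `validate` method are hypothetical and are not part of CML or its API.

```python
from dataclasses import dataclass, field
from enum import Enum


class Role(Enum):
    CONTROLLER = "controller"  # hosts controller code, UI, API, reference platform images
    COMPUTE = "compute"        # runs the node VMs that make up a lab


class ClusterTraffic(Enum):
    # The three uses of the intracluster network listed in the text.
    FILE_SHARING = "NFS share of images and node definitions"
    LAB_TRAFFIC = "simulated network traffic between computes or to/from the controller"
    CONTROL_API = "low-level API calls from the controller to the computes"


@dataclass
class Server:
    hostname: str
    role: Role


@dataclass
class Cluster:
    servers: list[Server] = field(default_factory=list)

    def validate(self) -> None:
        """Check the 'one controller, one or more computes' rule."""
        controllers = [s for s in self.servers if s.role is Role.CONTROLLER]
        computes = [s for s in self.servers if s.role is Role.COMPUTE]
        if len(controllers) != 1:
            raise ValueError("a cluster needs exactly one controller")
        if not computes:
            raise ValueError("a cluster needs at least one compute")
```

Calling `Cluster([...]).validate()` on a list with one controller and at least one compute returns without error. Any other combination raises `ValueError`.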

Defining a controller or a compute during CML 2.4 cluster installation

During installation, if the server has multiple network interface cards (NICs), the initial setup script asks the user which role the server should take: “controller” or “compute.” Depending on the role, the person deploying the cluster will enter additional parameters.

For a controller, the important parameters are its hostname and the secret key, which computes will use to register with the controller. When installing a compute, the same hostname and key are entered to establish the cluster relationship with the controller.

Every compute that uses the same cluster network (and knows the controller’s name and secret) will then automatically register with that controller as part of the CML cluster.
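The registration rule above (same cluster network, correct controller name, correct secret) can be sketched in a few lines of Python. This is a toy model under stated assumptions, not how CML implements registration: the `Controller` class, its `register` method, and the `network` label are all hypothetical names introduced for illustration.

```python
import hmac
from dataclasses import dataclass, field


@dataclass
class Controller:
    hostname: str
    secret: str
    network: str  # hypothetical label for the cluster Layer 2 segment
    computes: set[str] = field(default_factory=set)

    def register(self, compute_name: str, network: str,
                 controller_hostname: str, secret: str) -> bool:
        """Accept a compute only if all three conditions from the text hold."""
        same_segment = network == self.network
        knows_name = controller_hostname == self.hostname
        # Constant-time comparison is a common habit when comparing secrets.
        knows_secret = hmac.compare_digest(secret.encode(), self.secret.encode())
        if same_segment and knows_name and knows_secret:
            self.computes.add(compute_name)
            return True
        return False
```

In this model, a compute that is on a different segment, or that was installed with the wrong hostname or key, is never added to `computes`.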

CML 2.4 scalability limits and recommendations

We have tested clustering with a bare metal cluster of nine UCS systems, totaling over 3.5TB of memory and more than 630 vCPUs. On such a system, the largest single lab we ran (and support) is 320 nodes. This is an artificial limitation enforced by the maximum number of node licenses a system can hold. We currently support one CML cluster with up to eight computes.
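As a quick planning aid, the two limits stated above can be checked with a short Python helper. This is a sketch only: the constants come from the figures in this post, while the function name `check_plan` and its messages are hypothetical and not part of any CML tooling.

```python
MAX_COMPUTES = 8          # "up to eight computes" per cluster
MAX_NODES_PER_LAB = 320   # largest single lab run and supported


def check_plan(num_computes: int, lab_nodes: int) -> list[str]:
    """Return a list of problems with a planned cluster; empty means within limits."""
    problems = []
    if num_computes < 1:
        problems.append("a cluster needs at least one compute")
    if num_computes > MAX_COMPUTES:
        problems.append(f"{num_computes} computes exceeds the supported {MAX_COMPUTES}")
    if lab_nodes > MAX_NODES_PER_LAB:
        problems.append(f"{lab_nodes} nodes exceeds the supported {MAX_NODES_PER_LAB}")
    return problems
```

For example, `check_plan(8, 320)` returns an empty list, while a plan with nine computes or a 321-node lab returns one message per violated limit.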

Plans for future CML releases

While this release still has some limitations in features and scalability, remember that this is only Phase 1. The core functionality is there, and future releases promise even more features, such as the:

◉ ability to de-register computes.

◉ ability to put computes in maintenance mode.

◉ ability to migrate node VMs from one compute to another.

◉ central software upgrade and management of computes.

Source: cisco.com
