Deploying and running data center infrastructure – compute, networking, and storage – has traditionally been manual, slow, and arduous. Data center staffers are accustomed to doing a lot of command line configuration and spending hours in front of data center terminals. Hyperconverged Infrastructure (HCI) is the way out: it combines the provisioning and management of storage, networking, and compute into one package, and it uses software-defined data center technologies to automate these resources. At least in theory.

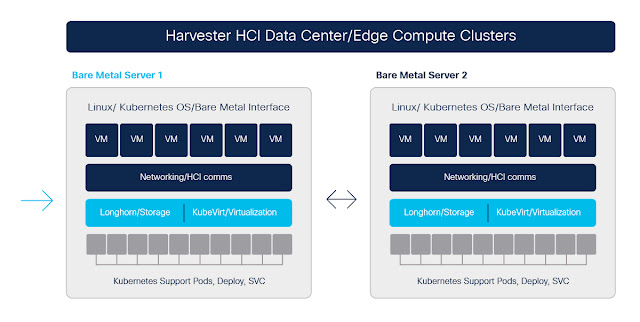

Recently, a colleague and I have been experimenting with Harvester, an open source project to build a cloud native, Kubernetes-based Hyperconverged Infrastructure tool for running data center and edge compute workloads on bare metal servers.

Harvester brings a modern approach to legacy infrastructure by running all data center and edge compute infrastructure (virtual machines, networking, and storage) on top of Kubernetes. It is designed to run container and virtual machine workloads side by side in a data center, and to lower the total cost of data center and edge infrastructure management.

Why we need hyperconverged infrastructure

Many IT professionals know about HCI concepts from using products from VMware, or by employing cloud infrastructure like AWS, Azure, and GCP to manage virtual machine applications, networking, and storage. The cloud providers have made HCI flexible by giving us APIs to manage these resources with less day-to-day effort, at least once the programming is done. And, of course, cloud providers handle all the hardware – we don’t need to stand up our own hardware in a physical location.

Multi-node Harvester cluster

However, most of the current products that support converged infrastructure tend to lock customers into the vendor’s own technology, and they usually come with licensing fees. Now, there is nothing wrong with paying for a technology when it helps you solve your problem. But single-vendor solutions can wall you off from knowing exactly how these technologies work, limiting your flexibility to innovate or react to issues.

If you could use a technology that combines with other technologies you are already required to know today – like Kubernetes, Linux, containers, and cloud native – then you could theoretically eliminate some of the headaches of managing edge compute / data centers, while also lowering costs.

This is what the people building Harvester are attempting to do.

Adapting to the speed of change

Cloud providers have made it easier to deploy and manage the infrastructure surrounding applications. But this has come at the expense of control, and in some cases performance.

HCI, which the cloud providers support and provide, gets us some control back. However, the recent rise of application containers over virtual machines has again changed how infrastructure is managed, and even how it is thought of. Containers abstract layers of application packaging while making that packaging lighter than last-generation VM packaging. They also provide application environments that start up faster and are easier to distribute because of their smaller image sizes. Kubernetes takes container technologies like Docker to the next level by adding networking, storage, and resource management between containers, in an environment that connects everything together. Kubernetes allows us to integrate microservice applications with automation and speedy deployments.

Kubernetes offers an improvement on HCI technologies and methodologies. It provides a better way for developers to create cloud agnostic applications, and to spin up workloads in containers more quickly than traditional VM applications. Kubernetes did not aim to replace HCI, but it did make a lot of the goals of software deployment and delivery simpler, from an HCI perspective.

In a lot of environments, Kubernetes runs inside VMs, so you still need external HCI technology to manage the underlying infrastructure for the VMs that are running Kubernetes. The problem now is that if you want to run your application in Kubernetes containers on infrastructure you control, you have different layers of HCI to support. Even if you get better application management with Kubernetes, infrastructure management becomes more complex. You could try to use vanilla Kubernetes for every part of your edge compute / data center stack and run it directly on bare metal instead of traditional HCI technologies. But then you have to be OK with migrating all workloads to containers, and in some cases that is a high hurdle to clear, not to mention the HCI networking that you would need to migrate over to Kubernetes.

The good news is that there are IoT and edge compute projects that can help. The Rancher organization, for example, is creating a lightweight version of Kubernetes, k3s, for IoT compute resources like the Raspberry Pi and Intel NUC computers. It helps us push Kubernetes onto more bare metal infrastructure. Other orgs, like KubeVirt, have created technologies to run virtual machines inside containers and on top of Kubernetes. This has helped with the speed of deployment for VMs, and it allows us to use Kubernetes for our virtual networking layers and all application workloads (containers and VMs). And other technology projects, like Rook and Longhorn, help with persistent storage for HCI through Kubernetes.

If only these could combine into one neat package, we would be in good shape.

Hyperconverged everything

Knowing where we have come from in the world of Hyperconverged Infrastructure for our data centers and our applications, we can now move on to what combines all these technologies. Harvester packages up k3s (lightweight Kubernetes), KubeVirt (VMs in containers), and Longhorn (persistent storage) to provide Hyperconverged Infrastructure for bare metal compute using cloud native technologies, and wraps an API / Web GUI bow on it for convenience and automation.
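To make "VMs on top of Kubernetes" a little more concrete, here is a minimal sketch of how a virtual machine can be described as an ordinary Kubernetes object. It builds a KubeVirt `VirtualMachine` manifest as a Python dictionary and prints it as JSON. The field names follow the `kubevirt.io/v1` API as we understand it and should be checked against the version you have installed. The helper name `virtual_machine_manifest`, the VM name, and the disk image are our own illustrative choices. This shows KubeVirt's object format only; it is not Harvester's own API.

```python
import json


def virtual_machine_manifest(name: str, image: str, memory: str = "1Gi") -> dict:
    """Build a KubeVirt VirtualMachine object as a plain dictionary.

    Hypothetical helper for illustration; field names are assumed to
    match the kubevirt.io/v1 VirtualMachine resource.
    """
    return {
        "apiVersion": "kubevirt.io/v1",
        "kind": "VirtualMachine",
        "metadata": {"name": name},
        "spec": {
            # Ask KubeVirt to start the VM as soon as it is created.
            "running": True,
            "template": {
                "metadata": {"labels": {"kubevirt.io/vm": name}},
                "spec": {
                    "domain": {
                        "devices": {
                            # One virtio disk, backed by the volume below.
                            "disks": [
                                {"name": "rootdisk", "disk": {"bus": "virtio"}}
                            ]
                        },
                        "resources": {"requests": {"memory": memory}},
                    },
                    "volumes": [
                        # The VM disk is shipped as a container image.
                        {"name": "rootdisk", "containerDisk": {"image": image}}
                    ],
                },
            },
        },
    }


if __name__ == "__main__":
    # Example values only; swap in your own VM name and disk image.
    vm = virtual_machine_manifest(
        "demo-vm", "quay.io/kubevirt/cirros-container-disk-demo"
    )
    print(json.dumps(vm, indent=2))
```

The printed JSON could, under these assumptions, be saved to a file and applied to a cluster that has KubeVirt installed, the same way a container Deployment would be. That is the point of the exercise: the VM becomes one more declarative resource next to your containers.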

Source: cisco.com
