In today's rapidly evolving IT landscape, network automation has become a cornerstone for efficiency, scalability, and agility. Professionals looking to validate their expertise in this critical area often turn to the Cisco 350-901 AUTOCOR exam, a challenging yet highly rewarding certification. This exam, officially known as Cisco Designing, Deploying, and Managing Network Automation Systems, is your gateway to demonstrating proficiency in automating enterprise networks.

While the prospect of taking such a comprehensive exam can seem daunting, with the right preparation, confidence is well within reach. This article is a practical guide to that preparation and shows how an effective Cisco 350-901 practice test can be your most powerful tool. We'll walk you through the exam's structure, share preparation tips, and show you how to use practice exams to pass confidently.

Understanding the Cisco 350-901 AUTOCOR Exam

Before diving into preparation strategies, it's crucial to have a clear understanding of what the Cisco 350-901 AUTOCOR exam entails. This is a professional-level core exam designed to validate a candidate's skills in network automation.

Exam Overview: Cisco Designing, Deploying, and Managing Network Automation Systems

The 350-901 AUTOCOR exam covers a broad spectrum of topics essential for automating modern networks. Here's a quick look at its core details:

- Exam Name: Cisco Designing, Deploying, and Managing Network Automation Systems

- Exam Code: 350-901 AUTOCOR

- Exam Price: $400 USD

- Duration: 120 minutes

- Number of Questions: 90-110 (typically a mix of multiple-choice, drag-and-drop, and simlet questions)

- Passing Score: Variable (approximately 750-850 out of 1000)

Candidates are expected to demonstrate proficiency in various aspects of network automation, from foundational concepts to advanced deployment and management of automated systems. The exam is challenging, requiring both theoretical knowledge and practical understanding.

Who Should Take This Exam?

The Cisco 350-901 exam is ideal for network engineers, software developers, and automation specialists who are involved in designing, deploying, and managing network automation solutions. If you're looking to formalize your expertise in network programmability and automation, or aiming to achieve higher-level Cisco certifications, this exam is a perfect fit. It signifies a strong understanding of modern network architectures and automation tools.

The Cisco Network Automation Certification Path

The 350-901 AUTOCOR exam is the core exam for the CCNP Automation certification, the successor to the Cisco Certified DevNet Professional track. Passing it together with one concentration exam earns that certification. It is not part of CCNP Enterprise, whose core exam is 350-401 ENCOR. Understanding the broader landscape of Cisco network automation certifications helps candidates align their study efforts with their long-term career goals.

Why a Cisco 350-901 Practice Test is Your Best Ally

Effective preparation for the Cisco 350-901 exam goes beyond simply studying documentation. Strategic use of a robust Cisco 350-901 practice test matters for several reasons. These practice tests are not just about memorizing answers; they are tools for optimizing your study process and building confidence.

To truly understand the depth of knowledge required for this certification, you can explore comprehensive resources that offer valuable Cisco 350-901 sample questions and answers. These examples give you a realistic preview of the question types and complexity you'll face on exam day.

Simulating the Real Exam Environment

One of the primary benefits of a quality Cisco 350-901 practice test is its ability to replicate the actual exam environment. This includes the time constraints, question formats, and overall interface. By regularly exposing yourself to this simulation, you minimize surprises on exam day, allowing you to focus purely on the questions at hand rather than adapting to unfamiliar settings.

Identifying Your Knowledge Gaps

Practice tests are diagnostic tools. They don't just tell you what you know; more importantly, they reveal what you don't know. After taking a Cisco 350-901 practice test, a detailed score report often highlights specific areas where you struggled. This allows you to pinpoint your weak points and allocate your study time more efficiently, ensuring you cover all necessary topics comprehensively.

Building Confidence and Reducing Anxiety

Success in any exam is as much about mental preparedness as it is about knowledge. Consistent performance on a Cisco 350-901 practice test builds significant confidence. As you see your scores improve, your self-assurance grows, which can be a critical factor in mitigating exam anxiety. Feeling prepared and confident allows you to approach the actual exam with a clear and focused mind.

Mastering Time Management

The Cisco 350-901 exam has a strict 120-minute time limit for 90-110 questions, which works out to roughly 65 to 80 seconds per question. This demands excellent time management. Regular practice with timed tests helps you develop a rhythm, learn to pace yourself, and make informed decisions about when to spend more time on a question and when to move on. This skill is invaluable for ensuring you attempt all questions within the allocated period.
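
The pacing arithmetic can be checked with a short sketch. It uses only the 120-minute limit and the 90-110 question range quoted in this article; the function name `seconds_per_question` and the 10-minute review reserve are illustrative assumptions, not exam rules.

```python
def seconds_per_question(total_minutes: int, questions: int, review_minutes: int = 0) -> float:
    """Average seconds available per question after setting aside review time."""
    if questions <= 0:
        raise ValueError("questions must be positive")
    usable_seconds = (total_minutes - review_minutes) * 60
    return usable_seconds / questions


# Question counts are the range quoted in this article; the reserve is hypothetical.
for count in (90, 110):
    full = seconds_per_question(120, count)
    reserved = seconds_per_question(120, count, review_minutes=10)
    print(f"{count} questions: {full:.0f} s each, {reserved:.0f} s with a 10-minute reserve")
```

At the upper end of the range, holding back ten minutes leaves exactly one minute per question, which is a useful pace to rehearse in timed practice.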

Deconstructing the 350-901 Exam Topics (Syllabus)

To prepare effectively, it's essential to break down the official Cisco Designing, Deploying, and Managing Network Automation Systems exam topics. Understanding the weighting and specifics of each section will guide your study efforts. You can find the detailed blueprint in the AUTOCOR exam topics on the Cisco Learning Network.

Network Automation (30%)

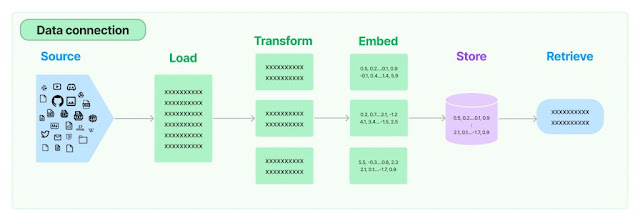

This foundational section covers the core concepts and principles of network automation. It includes data modeling with YANG, data encodings such as JSON and XML, API-based automation (RESTCONF, NETCONF), common automation protocols, and the role of network programmability. You'll need to grasp how to interact with network devices using programmatic interfaces and understand the benefits of automation in network operations. Key topics also include Python for network automation, especially working with libraries and scripts.
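
As a rough illustration of the programmatic interfaces named above, the sketch below builds a RESTCONF request URL for one interface in the standard `ietf-interfaces` YANG model and reads a JSON reply. The hostname `r1.example.net`, the helper names, and the sample reply are hypothetical and hand-written for illustration; no device is contacted, and real devices may shape the reply slightly differently.

```python
import json
from urllib.parse import quote

# Media type defined for RESTCONF JSON payloads (RFC 8040).
RESTCONF_HEADERS = {"Accept": "application/yang-data+json"}


def interface_url(host: str, name: str) -> str:
    """Build the RESTCONF data URL for one interface list entry."""
    # List key values in the path must be percent-encoded, including "/".
    key = quote(name, safe="")
    return f"https://{host}/restconf/data/ietf-interfaces:interfaces/interface={key}"


# Hand-written sample reply (hypothetical data, not captured from a device).
SAMPLE_RESPONSE = """
{
  "ietf-interfaces:interface": [
    {
      "name": "GigabitEthernet1/0/1",
      "description": "uplink",
      "type": "iana-if-type:ethernetCsmacd",
      "enabled": true
    }
  ]
}
"""


def summarize(body: str) -> dict:
    """Extract the name and admin state from a single-interface reply."""
    data = json.loads(body)["ietf-interfaces:interface"]
    # Accept either a one-element list or a bare object.
    entry = data[0] if isinstance(data, list) else data
    return {"name": entry["name"], "enabled": entry["enabled"]}


print(interface_url("r1.example.net", "GigabitEthernet1/0/1"))
print(summarize(SAMPLE_RESPONSE))
```

To try this against a lab device, you would pass the URL and headers to an HTTP client of your choice along with credentials; that step is left out here so the sketch runs offline.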

Infrastructure as Code (30%)

Infrastructure as Code (IaC) is a critical paradigm in modern IT, and this section focuses on applying its principles to networking. Topics include using version control systems (like Git) for network configurations, understanding configuration management tools (such as Ansible, Puppet, Chef, SaltStack), and deploying network services through automated workflows. Expect questions on playbooks, modules, and how to define network states using code. A strong grasp of Git operations and common IaC tools is essential here.
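
The idea of defining network state in code can be sketched in a few lines. This is not how Ansible or any other tool named above is implemented; it is a minimal stand-in, assuming VLANs are described as plain dictionaries, that shows the declarative pattern those tools share: compare desired state with running state and act only on the difference. All names and data are hypothetical.

```python
def plan_changes(desired: dict, running: dict) -> list:
    """Return the actions needed to move `running` to `desired`."""
    actions = []
    for name, want in sorted(desired.items()):
        have = running.get(name)
        if have is None:
            actions.append(f"create {name}")
            continue
        # Compare every attribute present on either side.
        changed = sorted(k for k in want.keys() | have.keys() if want.get(k) != have.get(k))
        if changed:
            actions.append(f"update {name}: {', '.join(changed)}")
    # Anything running that is not declared gets removed.
    for name in sorted(set(running) - set(desired)):
        actions.append(f"remove {name}")
    return actions


# Hypothetical desired state (what would live in Git) and device state.
DESIRED = {"vlan10": {"name": "users"}, "vlan20": {"name": "voice"}}
RUNNING = {"vlan10": {"name": "user"}, "vlan30": {"name": "legacy"}}

print(plan_changes(DESIRED, RUNNING))
# Once the device matches the declaration, the plan is empty (idempotence).
print(plan_changes(DESIRED, DESIRED))
```

The second call returning no actions is the property to remember for exam questions on idempotency: re-running a correct playbook against a compliant device should change nothing.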

Operations (20%)

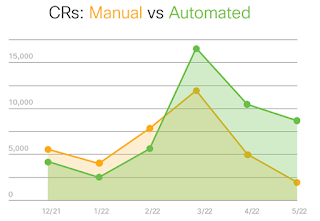

This section delves into the operational aspects of automated networks. It covers monitoring, logging, and troubleshooting automated systems. You'll need to understand how to collect telemetry data, analyze network events, and implement automated remediation strategies. Concepts like NetFlow, IPFIX, syslog, SNMP, and modern streaming telemetry are crucial. The ability to troubleshoot issues within an automated environment, including debugging automation scripts and identifying root causes in automated deployments, is key.
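
Log analysis of this kind often starts with parsing. Cisco IOS syslog messages conventionally carry a `%FACILITY-SEVERITY-MNEMONIC:` tag, and the sketch below pulls those fields out and counts events per severity. The sample lines are hand-written for illustration, and the regular expression is a simplification that may not cover every platform's message format.

```python
import re
from collections import Counter

# Simplified pattern for the %FACILITY-SEVERITY-MNEMONIC: tag (severity 0-7).
PATTERN = re.compile(
    r"%(?P<facility>[A-Z0-9_]+)-(?P<severity>[0-7])-(?P<mnemonic>[A-Z0-9_]+): (?P<text>.*)"
)

# Hypothetical log lines written for this example.
SAMPLE_LOG = [
    "*Mar  1 00:01:10.123: %LINK-3-UPDOWN: Interface GigabitEthernet0/1, changed state to down",
    "*Mar  1 00:01:11.125: %LINEPROTO-5-UPDOWN: Line protocol on Interface GigabitEthernet0/1, changed state to down",
    "*Mar  1 00:02:30.000: %SYS-5-CONFIG_I: Configured from console by admin on vty0",
    "not a syslog line",
]


def parse(line: str):
    """Return the tagged fields of one message, or None if no tag is found."""
    match = PATTERN.search(line)
    if match is None:
        return None
    event = match.groupdict()
    event["severity"] = int(event["severity"])
    return event


def count_by_severity(lines) -> Counter:
    """Count parsed events per numeric severity, skipping unparseable lines."""
    return Counter(event["severity"] for event in map(parse, lines) if event)


print(count_by_severity(SAMPLE_LOG))
```

Lower severity numbers are more urgent, so an automated remediation workflow would typically key off the low-numbered events first.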

AI in Automation (20%)

The final section explores the emerging role of Artificial Intelligence (AI) and Machine Learning (ML) in network automation. This includes understanding intent-based networking concepts, how AI can be used for network analytics, predictive maintenance, and optimizing network performance. While it might not require deep AI programming knowledge, understanding the application of AI/ML concepts to enhance network automation and decision-making is vital. This also touches on concepts like network assurance and the use of big data analytics for operational insights.
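
To make the analytics idea concrete, here is a deliberately simple statistical stand-in for anomaly detection on telemetry: flag samples that sit more than a chosen number of standard deviations from the mean. This is a z-score check, not machine learning, and production analytics platforms use far richer models; the utilization figures and the threshold of 2.0 are assumptions for illustration.

```python
from statistics import mean, stdev


def find_anomalies(samples, threshold: float = 2.0):
    """Return indexes of samples whose z-score exceeds `threshold`."""
    if len(samples) < 2:
        return []
    mu = mean(samples)
    sigma = stdev(samples)
    if sigma == 0:
        # All samples identical: nothing stands out.
        return []
    return [i for i, value in enumerate(samples) if abs(value - mu) / sigma > threshold]


# Hypothetical link utilization readings in percent, one per polling interval.
UTILIZATION = [41, 43, 40, 42, 44, 41, 95, 43, 42, 40]

print(find_anomalies(UTILIZATION))
```

Only the 95 percent reading is flagged here. A weakness worth noting is that a large outlier inflates the standard deviation itself, which is one reason real systems prefer more robust baselines.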

Crafting Your Cisco 350-901 Study Plan

A well-structured study plan is the backbone of successful exam preparation. Beyond simply taking a Cisco 350-901 practice test, a comprehensive strategy integrates various resources and techniques to ensure you're fully prepared.

Start with the Official Exam Blueprint

Your first and most important step is to thoroughly review the Cisco 350-901 exam blueprint. This document, available on Cisco's official 350-901 exam page, provides an exhaustive list of all topics and sub-topics you need to master. Treat it as your primary checklist, ensuring every item is covered in your study schedule.

Leveraging the Best Cisco 350-901 Study Guide

Investing in the best Cisco 350-901 study guide is highly recommended. Look for guides that are up-to-date with the latest exam blueprint, offer clear explanations, and include practical examples. Complement this with official Cisco documentation, whitepapers, and relevant RFCs to deepen your understanding. A good study guide will structure the content logically, making complex topics easier to digest.

Hands-on Experience is Key

Network automation is a practical skill. Reading about it isn't enough; you need to do it. Set up a lab environment, whether it's virtual (using EVE-NG, GNS3, or DevNet sandboxes) or physical. Practice deploying configurations, writing automation scripts, and interacting with network devices using APIs. Focus on implementing the network automation concepts from the blueprint, working through network programmability tasks, and getting familiar with the automation tools by actually using them. Practical experience solidifies theoretical knowledge.

Incorporating Cisco Designing, Deploying, and Managing Network Automation Systems Training

For some, formal training provides the structured learning environment needed to excel. Consider enrolling in official Cisco Designing, Deploying, and Managing Network Automation Systems training courses. These courses are often taught by certified instructors, offer hands-on labs, and cover the exam topics in depth. While an investment, they can significantly accelerate your learning process and provide valuable insights.

Regular Review and Self-Assessment

Integrate regular review sessions into your study plan. After covering a topic, use flashcards, create summary notes, or discuss it with a study partner. Critically, perform self-assessments using a Cisco 350-901 practice test, sample questions, or quizzes. Sample-question PDFs and free practice exams can also help you gauge your readiness and track progress. This iterative process of study, practice, and review is crucial for mastery.

Maximizing Your Preparation with Cisco 350-901 Practice Questions and Answers

Once you have a solid understanding of the exam content, the next crucial step is to engage intensively with Cisco 350-901 practice questions and answers. This focused practice is where theoretical knowledge transforms into exam-ready performance.

The Power of Repetition

Repetition, when done correctly, is a powerful learning tool. Regularly tackling practice questions helps reinforce concepts, improves recall, and familiarizes you with the question styles used in the actual exam. Don't just answer once and move on; revisit questions you struggled with, understand the reasoning, and re-attempt them after further study.

Analyzing Explanations, Not Just Answers

The true value of Cisco 350-901 practice questions and answers lies not merely in getting the correct answer, but in understanding the explanation behind each option. A good practice test will provide detailed rationales for both correct and incorrect answers. Analyze these explanations thoroughly. This process helps you grasp the underlying concepts more deeply and avoids making similar mistakes in the future. It's an essential part of a comprehensive Cisco 350-901 exam review.

Varying Your Sources

While having a primary source for your Cisco 350-901 practice questions and answers is good, try to incorporate questions from various reputable providers. Different sources might phrase questions differently, covering the same topic from slightly varied angles. This broadens your exposure and prevents over-reliance on a single style of questioning. However, ensure all sources align with the official Cisco blueprint to avoid irrelevant study.

Tracking Your Progress

Maintain a log of your practice test scores. Note the areas where you consistently perform well and those where you struggle. Tracking your progress lets you see your improvement over time, which can be highly motivating. It also helps identify persistent weak areas that require additional study or a different approach. Focus on improving your understanding of core network automation concepts, not just memorizing answers.
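
A score log can be as simple as one dictionary per attempt. The sketch below, using the four blueprint sections as keys, averages each section across attempts and lists the ones below a target. The scores and the 80 percent target are made-up values for illustration, and per-section percentages are an assumption about what your practice platform reports.

```python
from statistics import mean

# Hypothetical practice results: percent correct per blueprint section.
ATTEMPTS = [
    {"Network Automation": 70, "Infrastructure as Code": 60, "Operations": 80, "AI in Automation": 50},
    {"Network Automation": 80, "Infrastructure as Code": 70, "Operations": 90, "AI in Automation": 60},
]


def weakest_domains(attempts, target: float = 80):
    """Return sections whose average score is below `target`, weakest first."""
    averages = {domain: mean(a[domain] for a in attempts) for domain in attempts[0]}
    below = [domain for domain, avg in averages.items() if avg < target]
    return sorted(below, key=averages.get)


print(weakest_domains(ATTEMPTS))
```

Sorting weakest first gives you a ready-made priority list for the next study block.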

Conquering the Exam Day: Tips for Success

All your hard work preparing and using a Cisco 350-901 practice test culminates on exam day. Strategic planning for this day can significantly impact your performance.

Before the Exam

Ensure you get a good night's sleep before the exam. A well-rested mind performs better. Confirm your exam appointment details, location, and required identification. If taking the exam remotely, test your setup well in advance to avoid technical glitches. Make sure the exam fee is paid and your registration is confirmed to avoid last-minute surprises. Do a light review of your notes, but avoid cramming. Trust the preparation you've already done.

During the Exam

Upon starting, quickly note the number of questions and the time remaining so you can allocate your time. Read each question carefully, paying close attention to keywords and what is being asked. Sometimes, a question might ask for the 'least' or 'most' appropriate answer. If you encounter a difficult question, flag it and move on. Don't get stuck. Answer all questions, even if you have to make an educated guess, as there's typically no penalty for incorrect answers. Keep an eye on the clock and aim to finish with a few minutes to spare for a quick review. While the Cisco 350-901 exam passing score is variable, aim for consistently high scores in your practice tests to build confidence for the real thing. This is a challenging exam, so stay focused and calm.

After the Exam

Regardless of the immediate outcome, take time to reflect on your experience. What went well? What could have been better? This feedback is invaluable for future certification endeavors. If you passed, celebrate your achievement! If not, don't be discouraged. Review the score report to identify your weak areas and formulate a revised study plan for your next attempt. Remember that the journey to certification is a learning process.

Your Journey Beyond the 350-901: Network Automation Career Path

Passing the Cisco 350-901 AUTOCOR exam is a significant milestone, opening doors to advanced roles and opportunities in the field of network automation. It validates your expertise in designing, deploying, and managing automated network systems, a skill set highly sought after in the industry.

What's Next After Passing?

For many, the 350-901 exam is a stepping stone. Passing the 350-901 AUTOCOR completes the core-exam requirement for the CCNP Automation certification (formerly Cisco Certified DevNet Professional); adding one concentration exam completes the certification. If you also want CCNP Enterprise, that track has its own core exam, 350-401 ENCOR. The knowledge gained from this exam will directly apply to these further certifications, strengthening your overall understanding of modern network architectures.

Impact on Your Career

Certified professionals with expertise in network automation are in high demand. This certification demonstrates your ability to streamline network operations, improve efficiency, and reduce human error, making you an invaluable asset to any organization. It can lead to roles such as Network Automation Engineer, DevOps Engineer, Cloud Network Engineer, or Solution Architect. The skills validated by this exam are directly transferable to real-world scenarios, boosting your employability and earning potential.

Continuous Learning in Network Automation

The field of network automation is constantly evolving. New tools, technologies, and methodologies emerge regularly. Achieving the 350-901 certification is an excellent foundation, but continuous learning is vital to stay relevant. Engage with industry communities, attend webinars, read blogs, and experiment with new automation platforms. Your certification signifies your current expertise, but your commitment to ongoing learning will define your long-term success in this dynamic domain.

Frequently Asked Questions About the Cisco 350-901 Exam

1. What is the Cisco 350-901 AUTOCOR exam about?

The Cisco 350-901 AUTOCOR exam, officially known as Cisco Designing, Deploying, and Managing Network Automation Systems, assesses a candidate's knowledge of implementing network automation solutions, including programmatic interaction with networks, automation tools, and associated technologies like Infrastructure as Code and AI in automation.

2. How much does the Cisco 350-901 certification cost?

The current exam price for the Cisco 350-901 AUTOCOR is $400 USD. This fee covers the cost of taking the exam at a Pearson VUE testing center or through their online proctoring service.

3. What is a good Cisco 350-901 exam passing score?

Cisco exam passing scores are variable and typically range from 750 to 850 out of 1000. It's important to aim for a thorough understanding of all topics rather than just a minimum score. Consistently scoring above 800 on a Cisco 350-901 practice test usually indicates readiness.

4. Where can I find a reliable Cisco 350-901 practice test?

Reliable Cisco 350-901 practice tests are available from reputable certification training providers. Look for platforms that offer detailed explanations for answers, align with the official exam blueprint, and provide a realistic exam simulation. The better study guides often include practice questions, and some online learning platforms specialize in comprehensive practice exams.

5. How long should I study for the Cisco 350-901 exam?

The study duration for the Cisco 350-901 exam varies based on your existing knowledge and experience with network automation. On average, candidates dedicate 2-4 months of focused study, including significant hands-on lab time and regular practice with a Cisco 350-901 practice test, to cover all the exam topics comprehensively and be ready for the exam's difficulty.

Conclusion

The Cisco 350-901 AUTOCOR exam is a formidable challenge, but one that is entirely conquerable with a strategic approach and the right tools. By understanding the exam blueprint, engaging in rigorous hands-on practice, and, critically, using a high-quality Cisco 350-901 practice test, you can significantly improve your chances of success.

Remember, the goal is not just to pass, but to truly master the concepts of network automation. A robust practice test environment provides the simulation, diagnostic feedback, and confidence boost necessary to excel. So, prepare diligently, practice smartly, and walk into your exam knowing you've done everything to equip yourself for success. Your journey to becoming a certified expert in network automation starts with a confident first step. Explore the full spectrum of Cisco Automation certifications to plan your future career advancements.