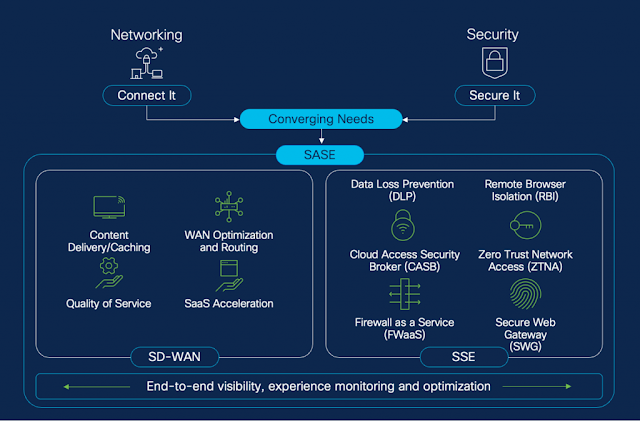

When it comes to protecting a hybrid workforce, while simultaneously safeguarding internal resources from external threats, cloud-delivered security with Security Service Edge (SSE) is seen as the preferred method.

Enterprise Strategy Group (ESG) recently conducted a study of IT and security practitioners, evaluating their views on a number of topics regarding SSE solutions. Respondents were asked for their views on security complexity, user frustration, remote/hybrid work challenges, and their take on the expectations vs. reality when it came to the benefits of SSE. The results provide critical insights into how to protect a hybrid workforce, streamline security procedures, and enhance end-user satisfaction. Some of the highlights from their report include:

- Remote/hybrid workers were found to be the biggest source of cyber-attacks, accounting for 44% of them.

- Organizations are moving towards cloud-delivered security, as 75% indicated a preference for cloud-delivered cybersecurity products vs. on-premises security tools.

- SSE is delivering value, with over 70% of respondents stating they achieved at least 10 key benefits involving operational simplicity, improved security, and better user experience.

- SecOps teams report significantly fewer attacks, with 56% stating they observed a reduction of more than 20% in security incidents using SSE.

Delving further into the report, ESG provides details explaining why organizations have gravitated towards SSE and achieved significant success. SSE simplifies the security stack, substantially improving protection for remote users, while enhancing hybrid worker satisfaction with easier logins and better performance. It helps avert numerous challenges, from stopping malware spread to shrinking the attack surface.

Here are some of the added benefits that SSE users see.

Overcome cybersecurity complexity

Among the respondents, more than two-thirds describe their current cybersecurity environment as complex or extremely complex. The top cited source (83%) involved the accelerated use of cloud-based resources and the need to secure access, protect data, and prevent threats. The second most common source of complexity was the number of security point products required (78%) with an average of 63 cybersecurity tools in use. Number three on the hit parade was the need for more granular access policies to support zero trust principles (77%) and the need to apply least privilege policies with user, application, and device controls. Other factors mentioned by wide margins include an expanded attack surface from work-from-home employees, use of unsanctioned applications and a growing number of more sophisticated attacks.

Organizations can offset these challenges by deploying SSE. These protective services reside in the cloud, between the end-user and the cloud-based resources they utilize, as opposed to on-premises methods that are ‘out of the loop’. SSE consolidates many security features, including Zero Trust Network Access (ZTNA), Secure Web Gateway (SWG), Firewall as a Service (FWaaS) and Cloud Access Security Broker (CASB), with one dashboard to simplify operations. With advanced ZTNA with zero trust access (ZTA), authorized users can only connect to specific, approved apps. Discovery and lateral movement by compromised devices or unauthorized users are prevented.
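To make the "specific, approved apps" idea concrete, here is a minimal sketch of a default-deny access decision. It is an illustration only, not how any SSE product implements ZTNA: the `AccessRequest` type, the `APPROVED_APPS` table, the user and app names, and the `is_allowed` function are all hypothetical, and the device posture result is assumed to be computed elsewhere.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    user: str
    app: str
    device_compliant: bool  # assumed outcome of a separate posture check


# Hypothetical allow-list: each user maps to the apps they are approved for.
APPROVED_APPS = {
    "alice": {"payroll", "wiki"},
    "bob": {"wiki"},
}


def is_allowed(request: AccessRequest) -> bool:
    """Default deny: allow only an explicit user-to-app approval on a compliant device."""
    if not request.device_compliant:
        return False
    # Unknown users fall back to an empty set, so they are denied everything.
    return request.app in APPROVED_APPS.get(request.user, set())
```

In this sketch, anything not explicitly approved is refused, so an unknown user or a non-compliant device gets no access at all. That is the least privilege behavior the paragraph above describes.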

Enhance end-user experience

The report found current application access processes often result in user frustration. Respondents reported their workforce uses a collective average of 1,533 distinct business applications. As these apps typically reside in the cloud, secure usage is no longer straightforward. To support zero trust, many organizations have shifted to more stringent authentication and verification tasks. While good from a security perspective, 52% of respondents indicated their users were frustrated with this practice. Similarly, 50% mentioned user frustration at the number of steps to get to the application they need and 45% at having to choose the method of connection based on the application.

Performance was also cited as an issue, with 43% indicating user frustration. More than one-third (35%) indicated that latency was impacting the end-user experience. In some cases, this leads to users circumventing the VPN, which was cited by 38% of respondents. Such user noncompliance can introduce additional risk and the potential for malicious actors to view traffic flows.

VPNs were found to be poorly suited to supporting zero trust principles. They do not allow for granular access policies to be applied (mentioned by 31% of respondents) and are visible on the public internet, allowing attackers a clear entry point to the network and corporate applications (cited by 22%).

By implementing SSE with ZTA, administrators can give remote users the same type of straightforward, performant experience as when they are in the office, without IT teams being forced to make a trade-off between security and user satisfaction. ZTA allows users to access all, not some, of the potentially thousands of apps needed. ZTA provides a transparent and seamless ‘one-click’ login process. Backed by advanced protocols, users can obtain HTTP/3-level speeds with reduced latency and more resilient connections. Ultra-granular access with one-user-to-one-app ‘micro tunnels’ ensures security while providing resource obfuscation and preventing lateral movement.

Solve hybrid work security challenges

It’s challenging to secure hybrid workforces that include remote workers, contractors, and partners. This new hyper-distributed landscape results in an expanded attack surface, as well as an increase in device types and inconsistent performance. Respondents cited the need to ensure malware does not spread from remote devices to corporate locations and resources (55%) as their most critical concern. The second biggest issue mentioned is the need to check device posture (51%) consistently and continuously. In third place, IT listed defending an expanding attack surface due to users directly accessing cloud-based apps (50%). Other items of note include the lack of visibility into unsanctioned apps (45%) and protecting users as they access cloud apps (40%).

SSE is tailor-made to address these roadblocks to security. Multiple defense-in-depth features from the cloud ensure malware and other malicious activity are rooted out, preventing infection before it starts. Continuous, rich posture checks with contextual insights ensure device compliance. Thorough user identification and authentication procedures combined with granular access control policies prevent unauthorized resource access. CASB provides visibility into what applications are being requested and controls access. Remote Browser Isolation (RBI), DNS filtering, FWaaS and other features protect end users as they use Internet or public cloud services.

Benefits derived through SSE

The survey clearly demonstrates that many organizations that are utilizing SSE solutions are reaping a broad set of benefits. These can be categorized into three pillars: increased user and resource security, simplified operations, and enhanced user experience. When respondents were asked if they felt their initial expected benefits were subsequently realized once SSE was deployed, over 73% reported achieving at least ten critical advantages. A partial list of these factors includes:

- Simplified security operations/increased efficiency with ease of configuration and management

- Improved security specifically for remote/hybrid workforce

- Enacting principles of least privilege by allowing remote access only to approved resources

- Superior end-user access experience

- Prevention of malware spread from remote users to corporate resources

- Increased visibility into remote device posture assessment

Cisco leads the way in SSE

Cisco’s SSE solution goes way beyond standard protection. In addition to the four principal features previously listed (ZTNA, SWG, FWaaS, CASB), our Cisco Secure Access includes RBI, DNS filtering, advanced malware protection, Intrusion Prevention System (IPS), VPN as a Service (VPNaaS), multimode Data Loss Prevention (DLP), sandboxing and digital experience monitoring (DEM). This feature-rich array is backed by the industry-leading threat intelligence group, Cisco Talos, giving security teams a distinct advantage in detecting and preventing threats.

With Secure Access:

- Authorized users can access any app, including non-standard or custom, regardless of the underlying protocols involved.

- Security teams can employ a safer, layered approach to security, with multiple techniques to ensure granular access control.

- Confidential resources remain hidden from public view with discovery and lateral movement prevented.

- Performance is optimized with the use of next-gen protocols, MASQUE and QUIC, to realize HTTP/3 speeds.

- Administrators can quickly deploy and manage with a unified console, single agent and one policy engine.

- Compliance is maintained via continuous in-depth user authentication and posture checks.

Source: cisco.com