When new hardware is ordered and it arrives on site, it’s an exciting time. New hardware! New software! … But new challenges too! But the age-old challenge of getting new devices on the network doesn’t need to be one of them. Sitting in the lab pre-provisioning devices is no longer required if you’re using Cisco IOS XE, because of features like Cisco Network Plug-n-Play (PnP) and Zero Touch Provisioning (ZTP). PnP is the premium solution made possible with Cisco DNA Center, while Zero Touch Provisioning (ZTP) is for the do-it-yourself customers who don’t mind investing more time in configuring and maintaining the infrastructure required to bootstrap devices. IOS XE runs on the enterprise hardware and software platforms that includes Catalyst 9000 series of switches and wireless LAN controllers, and the ISR 1000 and 4000 series routers.

ZTP works when the DHCP client on the IOS XE device gets a DHCP Offer that includes option 67. This options, also called the “bootfile name,” tells the device which file to load and from where it’s available. Lets look at a few examples of how we can configure this on either the ISC DHCP Server or on the Cisco IOS DHCP Server.

The configuration example for the Linux ISC DHCP dhcpd.conf is below:

subnet 192.168.69.0 netmask 255.255.255.0 {

option bootfile-name "http://192.168.69.1/ztp.py"; }

For the Cisco IOS DHCP Server:

ip dhcp pool ZTP_DEMO

network 192.168.69.0 255.255.255.0

option 67 ascii http://192.168.69.1/ztp.py

In these examples option 67 points to a HTTP URL and a Python script. When the device see’s the python file in the option 67 then it downloads and executes it during bootup when no other configuration is present and without any manual user intervention. Pretty neat!

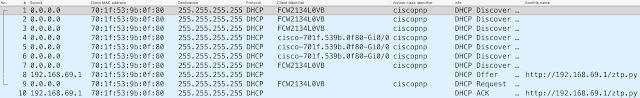

Next let’s take a look at a packet capture, which shows interesting things.

You might notice that the Client Identifier (option 61) is not consistent between DHCP Discovers. This is not a mistake, or multiple devices, but actually the intended behavior. Since IOS XE 16.8 the Client Identifier alternates between the device serial number, and the MAC address of the management port. When the DHCP server issues the DHCP Offer in packet 8, the bootfile-name (option 67) is set to http://192.168.69.1/ztp.py – This is the Python script that will be downloaded and run once the device is ready.

DHCP Configuration to enable Zero Touch Provisioning

ZTP works when the DHCP client on the IOS XE device gets a DHCP Offer that includes option 67. This options, also called the “bootfile name,” tells the device which file to load and from where it’s available. Lets look at a few examples of how we can configure this on either the ISC DHCP Server or on the Cisco IOS DHCP Server.

The configuration example for the Linux ISC DHCP dhcpd.conf is below:

subnet 192.168.69.0 netmask 255.255.255.0 {

option bootfile-name "http://192.168.69.1/ztp.py"; }

For the Cisco IOS DHCP Server:

ip dhcp pool ZTP_DEMO

network 192.168.69.0 255.255.255.0

option 67 ascii http://192.168.69.1/ztp.py

In these examples option 67 points to a HTTP URL and a Python script. When the device see’s the python file in the option 67 then it downloads and executes it during bootup when no other configuration is present and without any manual user intervention. Pretty neat!

Next let’s take a look at a packet capture, which shows interesting things.

You might notice that the Client Identifier (option 61) is not consistent between DHCP Discovers. This is not a mistake, or multiple devices, but actually the intended behavior. Since IOS XE 16.8 the Client Identifier alternates between the device serial number, and the MAC address of the management port. When the DHCP server issues the DHCP Offer in packet 8, the bootfile-name (option 67) is set to http://192.168.69.1/ztp.py – This is the Python script that will be downloaded and run once the device is ready.

Once the device is ready?

Yes, a few things need to happen before the Python script can run. Specifically, the Guest Shell container must start up. This is a linux container that runs within the IOS XE platform. This container has limited access to the IOS XE subsystem. This means that if you decide to run a crypto-miner within Guest Shell (not recommended!) that the IOS XE device will still handle the routing and switching of packets without any problems as the resource allocations for Guest Shell are separate from those responsible for the core capabilities of the device.

The power of the CLI, now with the flexibility of Python

Guest Shell does have some nice feature, specifically the Python API. This API allows the Guest Shell to send commands to the IOS XE operating system. What kind of commands? Show commands are supported with the Python CLI module cli.cli. Show commands are great for displaying information about the device, but are limited when it comes to making device configuration changes. To really harness the power of the Python API, the cli.execute and cli.configure modules provide a great deal of flexibility when it comes to device configuration. We can interact with the device through Python using the traditional “configure terminal” (“conf t”) interface and even send exec commands as needed. All the power of the CLI, now with the flexibility of Python.

So our container has started, and it knows which file to access. Lets look at an example ztp.py script next.

This simple Python script sets the Vlan1 interface IP, enables some AAA settings, turns on the NETCONF-YANG programmatic interface, and creates a user. After, it prints the output to the screen, so that if we have a console cable connected we get visual confirmation that the device has been set successfully.

This device has now been automatically configured using ZTP. It received an IP address on the management port from DHCP, the Python configuration file from a webserver via DHCP option 67, it started up Guest Shell container and configured remote access. We are now free to carry on with our day-to-day tasks as the device is online and in a known and managed state.