Protecting assets requires a Defense in Depth approach

Protecting assets within the enterprise requires the network manager to adopt automated methods of implementing policy on endpoints. A defense in depth approach, which applies a consistent policy both at the traditional firewall and on the host, takes a systemic view of the network.

Value-added resellers are increasingly helping customers deploy solutions composed of vendor hardware and software, supporting open source software, and developing code that integrates these components. A great example of this, and the subject of this blog post, is World Wide Technology making its integration of Ansible Tower and Cisco Tetration available as open source through Cisco DevNet Code Exchange.

I started my career in programming and analysis and in systems administration, then transitioned to network engineering. Now the projects I find most interesting require a combination of all those skills. Network engineers must view the network in a much broader scope: as a software system that generates telemetry, analyzes it, and uses automation to implement policy that secures applications and endpoints.

The theme for Networkers/CiscoLive 1995 was ‘Any to Any.’ We don’t live in that world today. Then, the focus was enabling communication between nodes. Today, the focus is network segmentation: restricting communication between nodes to what legitimate business purposes require. Today we ask, “What’s on my network?” “What is it doing?” and “Should it be?”

A Zero Trust security model, in the data center and on the endpoint, is a common topic of discussion with our customers. The traditional perimeter security model is less effective because attackers simply bypass the firewall, reaching internal assets through phishing exploits. The Tetration Network Policy Publisher is one means to automate policy creation.

Tetration Network Policy Publisher

Introduced in April 2018, the Tetration Network Policy Publisher is an advanced feature enabling third parties to subscribe to the same policy applied to servers by the Tetration enforcement agent.

The Tetration cluster runs a Kafka instance and publishes the enforcement policies to a message bus. Unlike message bus technologies that push messages to their clients, Kafka requires clients to explicitly ‘ask’ for messages: a client subscribes to a Kafka topic and pulls messages from it. Access to the policy is granted by downloading the Kafka client certificates from the Tetration user interface.
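To make the subscription model concrete, here is a minimal Python sketch of a Kafka consumer configured from the downloaded certificate bundle. It assumes the kafka-python package, and it assumes the bundle contains files named KafkaCA.cert, KafkaConsumerCA.cert, KafkaConsumerPrivateKey.key, kafkaBrokerIps.txt and topic.txt. Those file names and their contents are assumptions to verify against the bundle your cluster actually produces.

```python
import re
from pathlib import Path

# Assumed file names in the certificate bundle downloaded from the Tetration UI.
CA_FILE = "KafkaCA.cert"
CERT_FILE = "KafkaConsumerCA.cert"
KEY_FILE = "KafkaConsumerPrivateKey.key"
BROKERS_FILE = "kafkaBrokerIps.txt"
TOPIC_FILE = "topic.txt"


def load_consumer_settings(bundle_dir):
    """Return (topic, kwargs) for a TLS-authenticated Kafka consumer."""
    bundle = Path(bundle_dir)
    topic = (bundle / TOPIC_FILE).read_text().strip()
    # Assumption: brokers are listed as host:port, separated by commas or newlines.
    raw = (bundle / BROKERS_FILE).read_text()
    brokers = [b for b in re.split(r"[,\s]+", raw) if b]
    settings = {
        "bootstrap_servers": brokers,
        "security_protocol": "SSL",  # client-certificate TLS using the bundle
        "ssl_cafile": str(bundle / CA_FILE),
        "ssl_certfile": str(bundle / CERT_FILE),
        "ssl_keyfile": str(bundle / KEY_FILE),
        # With no committed offset, start from the oldest retained message.
        "auto_offset_reset": "earliest",
        "enable_auto_commit": False,
    }
    return topic, settings


def open_consumer(bundle_dir):
    """Subscribe to the policy topic (requires the kafka-python package)."""
    from kafka import KafkaConsumer  # lazy import keeps the loader usable without Kafka

    topic, settings = load_consumer_settings(bundle_dir)
    return KafkaConsumer(topic, **settings)
```

A caller could then iterate over `open_consumer(path)` and hand each `record.value`, which is still protobuf-encoded bytes, to a decoder.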

The enforcement policy messages are encoded as Google Protocol Buffers (protobuf), an efficient and extensible format for exchanging structured data between hosts. While more complex for the programmer, protobufs are more efficient than JSON or XML, both on the wire and in CPU usage.
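The efficiency claim follows from the protobuf wire format: integers are encoded as base-128 varints, and each field is prefixed by a small key computed as (field_number << 3) | wire_type instead of a repeated text name. The Python sketch below hand-encodes a three-field message and compares its size with compact JSON. The field numbers and names are hypothetical and are not Tetration's schema; in practice, policy messages would be decoded with classes generated by protoc from the policy's .proto definition, not by hand.

```python
import json


def encode_varint(value):
    """Encode a non-negative int as a protobuf base-128 varint."""
    if value < 0:
        raise ValueError("this sketch only handles non-negative integers")
    out = bytearray()
    while True:
        byte = value & 0x7F
        value >>= 7
        if value:
            out.append(byte | 0x80)  # high bit set: more bytes follow
        else:
            out.append(byte)
            return bytes(out)


def encode_field_varint(field_number, value):
    # Key = (field_number << 3) | wire_type; wire type 0 is VARINT.
    return encode_varint(field_number << 3 | 0) + encode_varint(value)


def encode_field_string(field_number, text):
    # Wire type 2 is length-delimited: key, byte length, then UTF-8 bytes.
    data = text.encode("utf-8")
    return encode_varint(field_number << 3 | 2) + encode_varint(len(data)) + data


# Hypothetical message: protocol=17 (UDP), port=161, name="snmp".
proto_bytes = (encode_field_varint(1, 17)
               + encode_field_varint(2, 161)
               + encode_field_string(3, "snmp"))
json_bytes = json.dumps({"protocol": 17, "port": 161, "name": "snmp"},
                        separators=(",", ":")).encode("utf-8")
print(len(proto_bytes), len(json_bytes))  # prints: 11 40
```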

This feature enables ‘defense in depth’. The enforcement policy can be converted to the appropriate network appliance configuration and implemented on firewalls, router access control lists, and security-enabled load balancers. Ansible is one means to automate pushing policy to network assets.

Ansible Tower by Red Hat

Ansible Tower is a web-based solution which makes Ansible Engine easier to use across teams within an IT enterprise. Tower includes a REST API and CLI for ease of integration with existing tools and processes. Ansible Engine is built on the open source Ansible project. Red Hat licenses and provides support services for Ansible Tower and Engine.
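As an illustration of the REST API, the following Python sketch uses only the standard library to prepare a request to Tower's job-template launch endpoint, /api/v2/job_templates/&lt;id&gt;/launch/. The Tower URL, template ID and credentials are placeholders, and the sketch assumes basic authentication is enabled on the Tower instance. Tower only honors extra_vars on launch when the template allows prompting for them or defines a survey.

```python
import base64
import json
import urllib.request


def build_launch_request(tower_url, job_template_id, username, password, extra_vars=None):
    """Prepare (but do not send) a POST that launches a Tower job template."""
    url = f"{tower_url.rstrip('/')}/api/v2/job_templates/{job_template_id}/launch/"
    body = json.dumps({"extra_vars": extra_vars or {}}).encode("utf-8")
    # HTTP basic auth; assumes it has not been disabled on this Tower instance.
    token = base64.b64encode(f"{username}:{password}".encode("utf-8")).decode("ascii")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Basic {token}",
        },
    )
```

Sending the request with `urllib.request.urlopen(request)` would return a JSON description of the newly launched job.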

Ansible has evolved into the de facto automation solution for configuration management across a wide range of compute, storage, network, firewall and load balancer resources, both in the cloud and on-premises.

Ansible interface to Cisco Tetration Network Policy Publisher

This project provides an abstraction layer between the Tetration Network Policy Publisher and Ansible, allowing the network administrator to push enforcement policy to ‘all the things’, without directly accessing the Tetration Kafka message bus. The code is open source and is publicly available through Cisco DevNet Code Exchange, a curated set of repos that make it easy to discover and use code related to Cisco technologies. The project repository, ansible-tetration, includes an Ansible module that retrieves enforcement policy from Tetration and exposes it as variables to an Ansible playbook. Subsequent tasks within the playbook can apply the policy to the configuration of network devices.

This functionality provides value to network operations because policy is published periodically in an easily consumed format. NetDevOps engineers can focus on implementing policy without needing to understand the complexity of creating it.

Figure 1 illustrates the components of this solution.

Figure 1 – Ansible interface to Cisco Tetration Network Policy Publisher

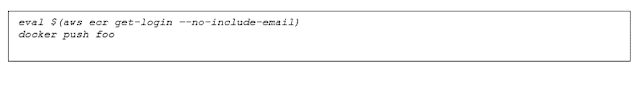

Links to additional resources for this project are available on Code Exchange.

Simplify the Complex

Within IT operations, forming NetDevOps teams to integrate disparate systems is the model for high-performance organizations. While each team member should have a general understanding of the organization’s goals, it is also important to include specialists in a technology, whose deep experience in one area increases the velocity of the team.

The project goal is to expose policy generated by Tetration in a simple format that a network operator can use to enable defense in depth within the data center.

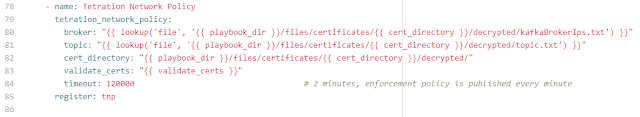

Figure 2 illustrates how the Python module tetration_network_policy can be included in an Ansible playbook to retrieve a security policy and register the results as a variable.

Figure 2 – Tetration Network Policy task

In this example, the variable tnp contains the Tetration Network Policy (inventory_filters, intents, tenant name and catch_all policy) for the requested Kafka topic. Subsequent tasks can reference these values and apply the security policy to network devices.
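Because tnp is ordinary structured data, a later task or a custom filter plugin can flatten it into individual rules. The Python sketch below joins each intent's consumer and provider inventory filters into (action, protocol, source, destination, port) tuples and appends the catch_all action as a final match-everything rule. The top-level keys inventory_filters, intents, tenant_name and catch_all follow the text, but the inner keys (id, addresses, consumer_filter_id, provider_filter_id, protocol_and_ports, ports, action) are a simplified, hypothetical layout; check Figure 3 for the structure the module actually returns.

```python
def flatten_policy(tnp):
    """Expand intents into (action, protocol, src, dst, port) tuples.

    Inner key names are hypothetical; adapt them to the real tnp structure.
    """
    # Map each inventory filter ID to the list of addresses it matches.
    addresses = {f["id"]: f["addresses"] for f in tnp["inventory_filters"]}
    rules = []
    for intent in tnp["intents"]:
        for src in addresses[intent["consumer_filter_id"]]:      # consumers initiate
            for dst in addresses[intent["provider_filter_id"]]:  # providers serve
                for entry in intent["protocol_and_ports"]:
                    for port in entry["ports"]:
                        rules.append((intent["action"], entry["protocol"], src, dst, port))
    # The catch_all action becomes the final, match-everything rule.
    rules.append((tnp["catch_all"], "ip", "0.0.0.0/0", "0.0.0.0/0", None))
    return rules


# Hypothetical sample policy: a management subnet may poll a web server via SNMP.
tnp = {
    "tenant_name": "Default",
    "catch_all": "DROP",
    "inventory_filters": [
        {"id": "mgmt", "addresses": ["10.9.0.0/24"]},
        {"id": "web", "addresses": ["10.1.1.10/32"]},
    ],
    "intents": [{
        "consumer_filter_id": "mgmt",
        "provider_filter_id": "web",
        "action": "ALLOW",
        "protocol_and_ports": [{"protocol": "udp", "ports": [161]}],
    }],
}
```

Calling `flatten_policy(tnp)` on this sample yields one ALLOW rule for UDP port 161 followed by the DROP catch-all.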

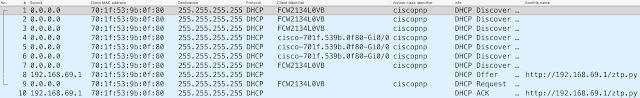

Figure 3 illustrates the contents of variable tnp, which will be used to generate access control lists on an ASA firewall.

Figure 3 – Sample JSON formatted network policy

By exposing the policy to an Ansible playbook, the data can easily be reformatted into a traditional CLI configuration and applied to a firewall, load balancer or Cisco ACI fabric.

Use Case: Apply Policy to ASA Firewalls

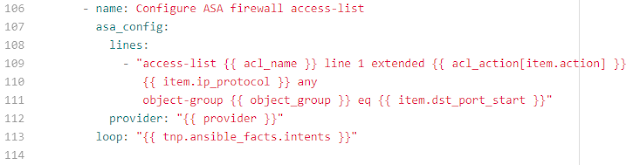

Now that the network policy has been retrieved and loaded into a variable within the playbook, it can be used to configure a network device. In this example, the target device is a Cisco ASA firewall.

The playbook uses the network policy to create the appropriate CLI commands and invokes the Ansible module asa_config to apply them to one or more firewalls defined in the inventory.
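The CLI generation step can be sketched in Python as a function that renders flattened rules as ASA extended access-list entries; the resulting strings are the kind of input asa_config accepts through its lines option. The rule dictionary keys, the ACL name TNP and the ALLOW/DROP action values are hypothetical, and the sketch handles IPv4 only.

```python
import ipaddress


def asa_address(cidr):
    """Render an IPv4 CIDR as ASA syntax: 'any', 'host A.B.C.D', or 'net mask'."""
    net = ipaddress.IPv4Network(cidr, strict=False)  # IPv4 only in this sketch
    if net.prefixlen == 0:
        return "any"
    if net.prefixlen == 32:
        return f"host {net.network_address}"
    return f"{net.network_address} {net.netmask}"


def asa_acl_lines(acl_name, rules):
    """Turn rule dicts into ASA extended access-list commands."""
    lines = []
    for rule in rules:
        action = "permit" if rule["action"] == "ALLOW" else "deny"
        line = (f"access-list {acl_name} extended {action} {rule['protocol']} "
                f"{asa_address(rule['src'])} {asa_address(rule['dst'])}")
        # Port qualifiers only apply to TCP and UDP.
        if rule.get("port") is not None and rule["protocol"] in ("tcp", "udp"):
            line += f" eq {rule['port']}"
        lines.append(line)
    return lines
```

For example, an ALLOW rule for UDP 161 from 10.9.0.0/24 to 10.1.1.10/32 renders as `access-list TNP extended permit udp 10.9.0.0 255.255.255.0 host 10.1.1.10 eq 161`.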

Figure 4 illustrates the playbook syntax.

Figure 4 – Ansible module asa_config

After the playbook runs, the rules from the sample JSON formatted network policy are present in the firewall configuration, as shown in Figure 5.

Figure 5 – ASA configuration

Note: Because SNMP uses a well-known port, the ASA CLI displays the keyword snmp in place of port 161.
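This substitution can matter for automation: asa_config compares the lines it is given against the running configuration, so a playbook that renders `eq 161` while the device reports `eq snmp` may see a difference on every run. One way to avoid that, sketched below in Python under the assumption that rules are rendered as plain extended ACL strings, is to translate well-known ports into the names the ASA displays before handing the lines to asa_config. The table is a partial, illustrative subset of ASA port literals, not an exhaustive list.

```python
import re

# Partial table of ASA port literals, keyed by (protocol, port number).
ASA_PORT_NAMES = {
    ("tcp", 22): "ssh",
    ("tcp", 23): "telnet",
    ("tcp", 53): "domain",
    ("tcp", 80): "www",
    ("tcp", 443): "https",
    ("udp", 53): "domain",
    ("udp", 123): "ntp",
    ("udp", 161): "snmp",
    ("udp", 162): "snmptrap",
}

# Matches a TCP/UDP extended ACL line that ends with "eq <number>".
_ACL_EQ = re.compile(r"^(access-list \S+ extended (?:permit|deny) (tcp|udp) .* eq )(\d+)$")


def to_asa_display(line):
    """Rewrite a trailing numeric port to the name the ASA shows, if known."""
    match = _ACL_EQ.match(line)
    if not match:
        return line  # not a TCP/UDP line with a single port
    name = ASA_PORT_NAMES.get((match.group(2), int(match.group(3))))
    return match.group(1) + name if name else line
```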