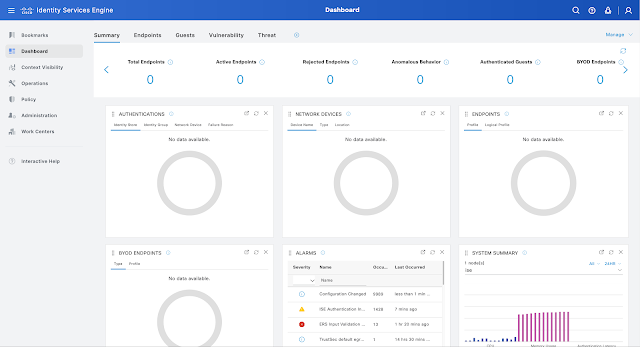

If you were at Cisco Live 2023 in Las Vegas, you saw Cisco announce a lot of new products. One of them was the latest update to Cisco Identity Services Engine: ISE 3.3.

Every network admin or security operator faces the same challenge: enhancing the network’s security while adding visibility and boosting efficiency, all without sacrificing flexibility. In other words, you want more features without the complications. Cisco ISE 3.3 is designed to deliver that.

Split Upgrade and Multi-Factor Classification add flexibility

When it comes to flexibility, Cisco ISE 3.3’s Split Upgrade feature will change the way you look at ISE upgrades. Customers can be hesitant to update to the newest version of Cisco ISE because the upgrade can take a long time to complete on ISE nodes with large databases. Split Upgrade is a new, less complex process: files are downloaded and prechecks are completed before the upgrade begins. It gives you better control over which ISE nodes to upgrade at any given time, without any downtime.

Cisco Identity Services Engine (ISE) Dashboard

Another feature in Cisco ISE 3.3 provides a way to easily identify clusters of unidentified endpoints found on the network. These endpoints are unidentified because many of the devices that connect to the network are not directly provisioned by IT. The feature uses AI/ML Profiling and multi-factor classification (MFC) to quickly identify clusters of identical unknown endpoints via a cloud-based ML engine. From there, the profiling policies proposed by the ML engine can be reviewed, and the devices are labeled as MFC Hardware Manufacturer, MFC Hardware Model, MFC Operating System or MFC Endpoint Type.

By placing the unidentified device into one of these four buckets, Cisco ISE takes much of the guesswork out of classification. From there, it’s easier for the customer to determine what the endpoints are and which policies should govern them on the network.
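To make the idea of "clusters of identical unknown endpoints" concrete, here is a minimal local sketch in Python. It is not the cloud-based ML engine described above; it simply groups endpoints whose observed attributes match exactly. The function name, the attribute names (`oui`, `dhcp_class`) and the sample data are all hypothetical.

```python
from collections import defaultdict


def cluster_identical(endpoints, keys):
    """Group endpoints whose values for `keys` match exactly.

    Returns a dict mapping each attribute fingerprint (a tuple)
    to the list of MAC addresses that share it.
    """
    clusters = defaultdict(list)
    for ep in endpoints:
        fingerprint = tuple(ep.get(k) for k in keys)
        clusters[fingerprint].append(ep["mac"])
    return dict(clusters)


# Hypothetical attribute names and sample data, for illustration only.
unknown = [
    {"mac": "AA:00:00:00:00:01", "oui": "Acme", "dhcp_class": "cam-fw1"},
    {"mac": "AA:00:00:00:00:02", "oui": "Acme", "dhcp_class": "cam-fw1"},
    {"mac": "BB:00:00:00:00:03", "oui": "Bolt", "dhcp_class": "sensor"},
]
clusters = cluster_identical(unknown, keys=("oui", "dhcp_class"))
```

Each resulting cluster is a group of look-alike devices that an admin could review and label once, rather than classifying every endpoint individually.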

Unique to Cisco: Wi-Fi Edge Analytics

A Cisco-only feature called Wi-Fi Edge Analytics allows network admins to mine data from Apple, Intel and Samsung devices to improve profiling. Cisco Catalyst 9800 wireless controllers pass endpoint-specific attributes, such as model, OS version and firmware, to ISE via RADIUS. This information is then used to profile common endpoints found on the network. Network admins now have more data, allowing them to create more precisely defined profiles: the more information at the admin’s fingertips, the more precise the profile.
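The point that more attributes yield more precise profiles can be illustrated with a small Python sketch. It is an assumption-laden toy, not how ISE evaluates profiling policies: `ProfileRule`, `match_profile`, the attribute names and the profile names are all hypothetical. The sketch picks the matching rule with the most conditions, so an endpoint that reports more attributes can land in a narrower profile.

```python
from dataclasses import dataclass


@dataclass
class ProfileRule:
    name: str
    conditions: dict  # attribute name -> required value


def match_profile(attributes, rules):
    """Return the name of the most specific rule whose conditions all match."""
    best = None
    for rule in rules:
        if all(attributes.get(k) == v for k, v in rule.conditions.items()):
            # Prefer the rule that checks the most attributes.
            if best is None or len(rule.conditions) > len(best.conditions):
                best = rule
    return best.name if best else "Unknown"


# Hypothetical rules and attribute names, for illustration only.
rules = [
    ProfileRule("Vendor-A-Device", {"vendor": "VendorA"}),
    ProfileRule("Vendor-A-Phone-X", {"vendor": "VendorA", "model": "Phone X"}),
]

coarse = match_profile({"vendor": "VendorA"}, rules)
precise = match_profile(
    {"vendor": "VendorA", "model": "Phone X", "os_version": "16.5"}, rules
)
```

With only the vendor known, the endpoint falls into the broad profile; once the model is also reported, it matches the narrower one.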

Even More Flexibility with Controlled Application Restart

To increase efficiency and predictability and to reduce downtime, Cisco ISE 3.3 offers Controlled Application Restart. It saves customers time and eliminates many of the headaches that come with managing ISE admin certificates. Customers can now control when the ISE administrative certificate is replaced, which lets them plan maintenance around the expiration of their current certificate. Prior to this feature, a certificate replacement required a complete reboot of all the PSNs in the deployment, with no way to know or control the order of the reboots, which led some admins to let the certificate lapse.

Changes to certificates require a restart because they affect systemwide configuration, and the restart cannot be done during operational hours because it involves significant downtime. Cisco ISE 3.3 now lets network admins schedule the restart at their convenience, such as the middle of the night or a weekend, when network usage is low. This avoids downtime during operational hours and helps security updates proceed without disruption.

Controlled Application Restart responds to an industry trend in which customers are moving to short-term certificates for added security. The maintenance needed to update a certificate, which can take upwards of 30 minutes per certificate, can be scheduled for the middle of the night, when network use is low, saving both time and resources.
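As a rough planning aid, the scheduling logic can be sketched in Python: find the next off-peak slot that still finishes before the current certificate expires. This is not an ISE API. The function name, the 02:00 start hour and the 30-minute default duration (taken from the figure above) are assumptions for illustration.

```python
from datetime import datetime, timedelta


def next_restart_window(now, cert_expiry, start_hour=2, duration_minutes=30):
    """Return the start of the next off-peak window that ends before expiry.

    Raises ValueError if no such window exists.
    """
    # Today's off-peak slot, or tomorrow's if today's has already passed.
    candidate = now.replace(hour=start_hour, minute=0, second=0, microsecond=0)
    if candidate <= now:
        candidate += timedelta(days=1)

    window_end = candidate + timedelta(minutes=duration_minutes)
    if window_end > cert_expiry:
        raise ValueError("no off-peak window left before the certificate expires")
    return candidate


# Hypothetical dates, for illustration only.
window = next_restart_window(
    now=datetime(2023, 7, 1, 14, 0),
    cert_expiry=datetime(2023, 7, 10, 0, 0),
)
```

Here `window` is 02:00 on the following day. If the certificate expires before the next off-peak slot can finish, the function raises an error instead of silently scheduling a restart too late.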

Improved Insights with pxGrid Direct Visibility

pxGrid Direct Visibility improves on the previous release (ISE 3.2): customers now get richer endpoint attributes from external databases such as ServiceNow, and these attributes can be shown in Context Visibility. Whether the data describes endpoints, users, devices or the apps running over the network, it provides details such as the device type, the device owner and whether the device is operational.

Having this endpoint data easily accessible allows you to make better network decisions based on facts. The data can then be used to run the network more efficiently, resulting in a safer network and less time spent translating information.

Tougher Security with the TPM Chip

The new TPM chip (on supported hardware) is a response to the need for increased security. Found on the new SNS-3700 models and, in the form of a virtual TPM, in some virtual environments, it is a dedicated chip where sensitive information can be stored. Previously, if Cisco ISE used a password to connect to a database, that password was stored in the file system, which is less secure. Now the information is housed on the TPM chip, which can also create true random numbers for key generation. That makes the information more difficult to access and gives it a more secure place to be stored.

With the new features and functionality in the latest Cisco ISE 3.3 update, your network’s security will be enhanced, and you will notice an increase in efficiency and visibility.


Source: cisco.com